List and Comparison of the Most Popular On-premise and Cloud DevOps Tools:

Our last tutorial in the DevOps series focused on Continuous Delivery in DevOps. Now let’s look at the best DevOps tools.

On our Software Testing forum, we have published several tutorials on areas like Project Management, ALM, Defect Tracking, and Testing, along with the individual tools that are best in class in a particular segment of the SDLC.

I have written some tutorials on IBM and Microsoft ALM tools. But now my focus is on the general trend of today’s automation market.

Table of Contents:

- Top DevOps Tools – A Detailed Review

- List of the Best DevOps Tools

- Comparison of Top DevOps Software

- #1) Jira Service Management

- #2) Site24x7

- #3) ManageEngine Applications Manager

- #4) ActiveControl

- #5) Eclipse – IDE for Java/J2EE Development

- #6) SonarQube

- #7) JFrog Artifactory

- #8) IBM Urbancode Deploy

- #9) CA-Release Automation (RA)

- #10) Docker

- #11) Selenium

- #12) Nagios

- #13) Chef

- #14) Jenkins

- #15) Vagrant

- #16) Splunk

- #17) Git – Version Control Tool

- #18) Ansible

- #19) Prometheus

- #20) Ganglia

- #21) Snort

- #22) Pagerduty

- #23) Puppet

- #24) Gulp

- #25) Kamatera

- #26) Buddy

- Conclusion

Top DevOps Tools – A Detailed Review

DevOps plays a vital role in automating the Build, Testing, and Release activities of project teams, which today are usually referred to as Continuous Integration, Continuous Testing, and Continuous Delivery.

Hence, teams today rely on more and more automation to deliver faster, get quick feedback from customers, provide quality software, reduce recovery time after crashes, and minimize defects. One therefore needs to ensure that the tools used, and the integrations between them, help the Development and Operations teams collaborate and communicate better.

In this tutorial, I will provide some guidelines on the DevOps tools and scenarios that, in my view, you could use for Java/J2EE projects in On-Premise and Cloud deployments and, most importantly, on how these tools can integrate and operate efficiently.

Illustrative DevOps Pipeline:

Let’s first look at the bigger picture: how all the tools discussed below integrate to give teams the end-to-end automated DevOps pipeline they are looking for.

I have always believed that process also plays a very important role in achieving the goals mentioned in the previous section. It is not only tools that enable DevOps; a process like Agile is equally important for faster delivery.

List of the Best DevOps Tools

The sections below review the top free, open-source, and commercial DevOps tools available.

Comparison of Top DevOps Software

| DevOps Tool | Best For | Platform | Functions | Free Trial | Price |
|---|---|---|---|---|---|
| Jira Service Management | IT operations teams, SMBs | Windows, Mac, iOS, Android, Web | ITSM | Free for up to 3 agents | Premium plan starts at $47 per agent; custom enterprise plan also available |
| Site24x7 | Small to large businesses & freelancers | Windows, Mac, Linux, Android, iPhone/iPad | Monitoring tool | 30 days | Starter: $9/month; Pro: $35/month; Classic: $89/month; Enterprise: starts at $225/month |
| ManageEngine Applications Manager | Small to large businesses | Windows, Linux, Mac | Application monitoring | 30 days | Quote-based |
| ActiveControl | Medium to large businesses | N/A | SAP DevOps & test automation | No | Get a quote |
| Nagios | Small to large businesses | Windows, Mac, Linux | Monitoring tool | Available | Nagios Core: Free; Network Analyzer: $1,995; Nagios XI: starts at $1,995; Nagios Fusion: $2,495 |
| Chef | Small to large businesses | Windows & Mac | Configuration management tool | No | Effortless Infrastructure: Essentials $16,500/yr, Enterprise $75,000/yr; Enterprise Automation Stack: Essentials $35,000/yr, Enterprise $150,000/yr |
| Jenkins | Small to large businesses & freelancers | Windows, Mac, Linux, FreeBSD, etc. | Continuous Integration tool | N/A (free) | Free |

Let’s review these tools in detail!

#1) Jira Service Management

Jira Service Management is a powerful, open tool that DevOps and business teams can use to implement ITSM best practices such as incident, change, problem, asset, and knowledge management. It also allows IT teams to configure automation rules that streamline repetitive tasks.

The software is effective at detecting incidents and taking appropriate measures to respond to them. It can minimize the risk associated with incidents through fast-tracked root cause analysis. DevOps teams can also rely on it to discover and track IT assets across the enterprise.

Features:

- Rapid Incident Management Response

- Gain Complete Visibility into IT Infrastructure

- Facilitates Team Collaboration

- Assess the performance of IT processes in real-time

- Automated Risk Assessment

- Asset Tracking and Discovery

Cost: Jira Service Management is free for up to 3 agents. Its premium plan starts at $47 per agent. A custom enterprise plan is also available.

#2) Site24x7

Site24x7 is a SaaS-based, all-in-one monitoring solution used to monitor websites, servers (both in the cloud and on-premises), applications, networks, and more.

Key Features:

- Monitor the performance of internet services like HTTPS, DNS server, FTP server, SSL/TLS certificate, and more from 110+ global locations (or via wireless carriers) and those within a private network.

- Stay on top of outages and pinpoint server issues with root cause analysis capabilities for Windows, Linux, FreeBSD, VMware, Nutanix, and Docker.

- Record and simulate multi-step user interactions in a real browser and optimize login forms, shopping carts, and other applications.

- Identify application servers and app components that are generating errors for Java, .NET, Ruby, PHP, Node.js, and mobile platforms.

- Comprehensively monitor critical network devices such as routers, switches, and firewalls.

- Get complete visibility across your cloud resources for platforms such as Amazon Web Services, Azure, GCP, and VMware.

- Gauge the application experience of real users across browsers, platforms, geographies, ISPs, and more.

- Manage your cloud cost and cut down spending on redundant cloud resources.

- Communicate downtime and promptly notify customers about your service status using status pages.

- Manage your customers’ IT infrastructure efficiently (for Managed Service Providers and Cloud Service Providers).

- Collect, consolidate, index, search, and troubleshoot issues using your application logs across servers and data center sites.

Cost: Starts from $9/month.

#3) ManageEngine Applications Manager

Applications Manager can help organizations of all types automate and significantly improve their DevOps processes, with the aim of delivering an optimal customer experience. It helps monitor and troubleshoot most issues that affect DevOps through code-level insights, application service maps, and more.

Features:

- Test the performance of critical user paths 24/7 on websites.

- Use actual traffic to monitor front-end performance.

- Gain total visibility into public, private, and hybrid cloud resources.

- Automated discovery and dependency mapping.

- Get to the bottom of DevOps issues with AI-assisted smart alerts.

- User-friendly dashboard to view stats.

- More than 500 pre-built reports for real-time and historical data analysis.

Cost: Contact for a quote

#4) ActiveControl

ActiveControl, from Basis Technologies, is part of a DevOps and test automation platform engineered specifically for SAP. It allows businesses to move their SAP applications from fixed release cycles to an on-demand delivery model based on CI/CD and DevOps.

It also means that SAP systems no longer need to operate as an island. With ActiveControl, they can be integrated into cross-application CI/CD pipelines through tools like GitLab and Jenkins to coordinate and accelerate the delivery of innovation.

Key Features:

- Automate more than 90% of manual effort, including build, conflict/dependency management, and deployment.

- Include SAP in cross-application CI/CD pipelines through integration with tools like GitLab and Jenkins.

- Shift quality left with 60+ automated analyzers that highlight risk, impact, and issues.

- Unique BackOut function rolls back deployments, minimizing Mean Time to Restore.

- Automates the management, alignment, and synchronization of changes between ECC and S/4.

- A fully customizable approval process to suit any DevOps workflow.

- The central web dashboard enables collaboration between distributed teams.

- Comprehensive metrics (cycle time, velocity, WIP, etc.) support continuous improvement.

- Automated code merge and conflict management for ‘N+N’ SAP project environments.

- A full audit trail enables straightforward regulatory compliance.

The Basis Technologies platform also includes Testimony, which supports the DevOps concept of shifting quality left through a completely new approach to SAP regression testing.

#5) Eclipse – IDE for Java/J2EE Development

Typically, developers write code and commit it to a version control repository such as Git/GitHub, which supports team development. Each developer pulls the code from the repository, makes changes, commits them, and pushes them back to Git/GitHub.

Git commits can be linked to Jira tasks/defects to show which files changed, who changed them, and the commit comments. This ensures that every code change a developer makes can be traced to the tasks or defects assigned to them.
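This linking usually works by including the Jira issue key (for example, `PAY-42`) in the commit message. As a minimal sketch, assuming that convention and a simplified key pattern (an uppercase project key, a hyphen, and a number), a commit hook or CI script could extract the keys like this; `issue_keys` and the `PAY` project are hypothetical names used only for illustration:

```python
import re

# Simplified Jira-style issue key (assumption): an uppercase project key that
# starts with a letter, then a hyphen and the issue number, e.g. "PAY-42".
ISSUE_KEY = re.compile(r"\b[A-Z][A-Z0-9]+-\d+\b")


def issue_keys(commit_message: str) -> list[str]:
    """Return the unique issue keys in a commit message, in first-seen order."""
    keys: list[str] = []
    for key in ISSUE_KEY.findall(commit_message):
        if key not in keys:
            keys.append(key)
    return keys


if __name__ == "__main__":
    # Hypothetical commit message referencing two issues (one of them twice)
    print(issue_keys("PAY-42 fix invoice rounding; see also PAY-7 and PAY-42"))
```

The pattern is deliberately loose; it would also match strings such as `UTF-8`, so a real hook would typically check the keys against the projects that actually exist.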

Why I like this tool – Many good IDEs can be used for Java/J2EE development. Eclipse is one such free, open-source tool; it is used not only for Java/J2EE development but also has plugins that support other languages like C/C++, Python, Perl, and JavaScript, to name a few.

Download specific versions for your projects from the website.

Website: https://www.eclipse.org/

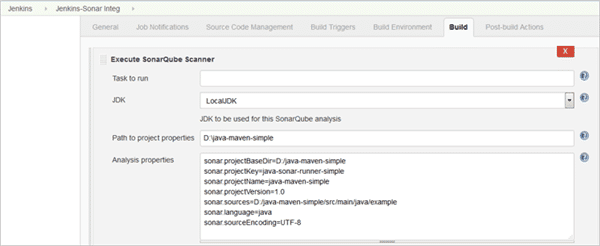

#6) SonarQube

SonarQube is an open-source tool that is mainly used for analyzing code quality.

Why I like this tool – It supports analyzing code in many popular programming languages. The code quality analysis is invoked during the Jenkins build process, and any violations can be seen in the SonarQube dashboard. This also helps speed up code reviews.

Every time a developer changes the source code, the Jenkins build triggers a SonarQube analysis, and the updated results are immediately available as part of the automation.

Organizations need reliable, high-quality code to reduce bugs that could be costly to fix later in the lifecycle. This is where SonarQube plays a very important role.
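A common way to make the analysis actually gate the build is to fail the pipeline when the project’s quality gate fails. The sketch below assumes the JSON shape returned by SonarQube’s `api/qualitygates/project_status` Web API endpoint (a `projectStatus` object with a `status` and a list of `conditions`); check the Web API documentation of your SonarQube version before relying on it. It only parses an already-fetched response, and the sample values are made up:

```python
# Sample response in the assumed shape of api/qualitygates/project_status
# (values are illustrative only).
SAMPLE_RESPONSE = {
    "projectStatus": {
        "status": "ERROR",
        "conditions": [
            {"status": "ERROR", "metricKey": "new_coverage", "comparator": "LT",
             "errorThreshold": "80", "actualValue": "72.5"},
            {"status": "OK", "metricKey": "new_bugs", "comparator": "GT",
             "errorThreshold": "0", "actualValue": "0"},
        ],
    }
}


def gate_passed(response: dict) -> bool:
    """True when the overall quality gate status is "OK"."""
    return response.get("projectStatus", {}).get("status") == "OK"


def failed_conditions(response: dict) -> list[str]:
    """Describe each failing condition as 'metric: actual (threshold comparator value)'."""
    failures = []
    for cond in response.get("projectStatus", {}).get("conditions", []):
        if cond.get("status") == "ERROR":
            failures.append(
                f"{cond.get('metricKey')}: {cond.get('actualValue')} "
                f"(threshold {cond.get('comparator')} {cond.get('errorThreshold')})"
            )
    return failures


if __name__ == "__main__":
    if not gate_passed(SAMPLE_RESPONSE):
        # A real CI step would exit non-zero here so that Jenkins marks the build as failed
        print("Quality gate failed:", "; ".join(failed_conditions(SAMPLE_RESPONSE)))
```

If you use the SonarQube Scanner plugin for Jenkins, its `waitForQualityGate` pipeline step serves the same purpose without custom code.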

Website: https://www.sonarqube.org/downloads/

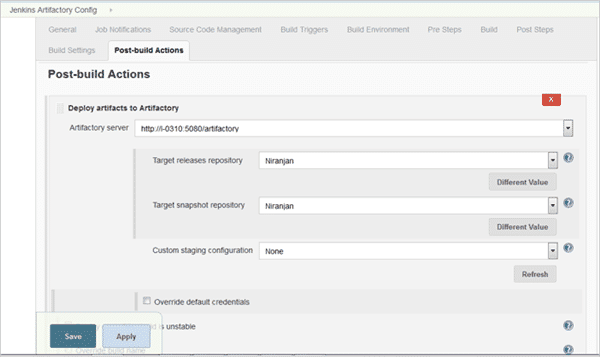

#7) JFrog Artifactory

We have seen that source code is stored in a version control tool like Git. The binary artifacts produced by the build process are usually stored in repository managers like JFrog Artifactory.

Once the build is completed, the WAR/JAR/EAR files are copied to and stored in Artifactory as part of the post-build action defined in Jenkins. Artifactory also keeps the different versions of each artifact, which is very useful if you ever need to manually roll back to a previous version.

These artifacts are then picked up for deployment to app servers like Tomcat, JBoss, WebLogic, etc., using tools like IBM Urbancode Deploy or CA RA. Sonatype Nexus Repository is another popular repository manager that can be used instead.
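Repository managers such as Artifactory and Nexus commonly store Java artifacts in the Maven repository layout, where each version lives at `group/path/artifactId/version/artifactId-version.packaging`. A minimal sketch, assuming that layout and plain numeric versions like `1.9.3` (no `-SNAPSHOT` or other qualifiers), of how a script could compute an artifact’s path and pick the version to roll back to; the coordinates below are hypothetical:

```python
from typing import Optional


def artifact_path(group_id: str, artifact_id: str, version: str, packaging: str = "war") -> str:
    """Path of an artifact inside a Maven-layout repository."""
    group_path = group_id.replace(".", "/")
    return f"{group_path}/{artifact_id}/{version}/{artifact_id}-{version}.{packaging}"


def version_key(version: str) -> tuple[int, ...]:
    """Compare versions numerically, so that 1.10.0 sorts after 1.9.3."""
    return tuple(int(part) for part in version.split("."))


def rollback_target(versions: list[str], current: str) -> Optional[str]:
    """Newest stored version that is older than `current`, or None if there is none."""
    older = [v for v in versions if version_key(v) < version_key(current)]
    return max(older, key=version_key) if older else None


if __name__ == "__main__":
    print(artifact_path("com.example.shop", "storefront", "1.10.0"))
    print(rollback_target(["1.2.0", "1.9.3", "1.10.0", "1.4.0"], "1.10.0"))
```

Sorting numerically matters here: a plain string sort would place `1.10.0` before `1.9.3`.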

Price: Different pricing options are available for JFrog Artifactory (Pro, Pro Plus, Pro X, and Enterprise).

Example – Jenkins-Artifactory Integration

Website: https://jfrog.com/artifactory/free-trial/

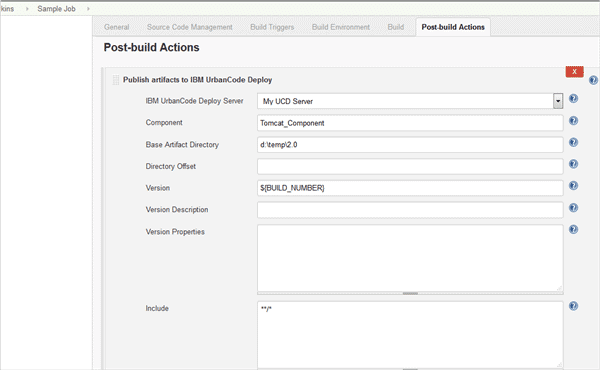

#8) IBM Urbancode Deploy

IBM Urbancode Deploy is a commercial tool from IBM that helps automate the deployment of artifacts to different environments, from development through production.

After you install the IBM Urbancode Deploy plugin in Jenkins, each build can automatically trigger the deployment of the binary artifacts to different environments.

IBM Urbancode Deploy provides the following features to help with ease of deployment:

- Automated deployment and rollback

- Changes propagated to all environments including databases.

- Appropriate configuration for different environments.

- Approval process

- A visual depiction of the complete deployment process.

- A complete inventory of what is deployed and who deployed it.

- Integration with multiple J2EE app servers like Tomcat, JBoss, Oracle Weblogic, IBM Websphere Application Server, etc., and IIS web server for .NET deployments.

- Integration with container platforms like Docker.

Example – Integration of Jenkins with IBM Urbancode Deploy

Example – Graphical view of application deployment to Tomcat

A free trial copy of IBM Urbancode Deploy is available for evaluation purposes. You will need to register for an IBM ID to download it.

Website: https://www-01.ibm.com/marketing/iwm/iwm/web/preLogin.do?source=RATLe-UCDeploy-EVAL&S_CMP=web_dw_rt_swd

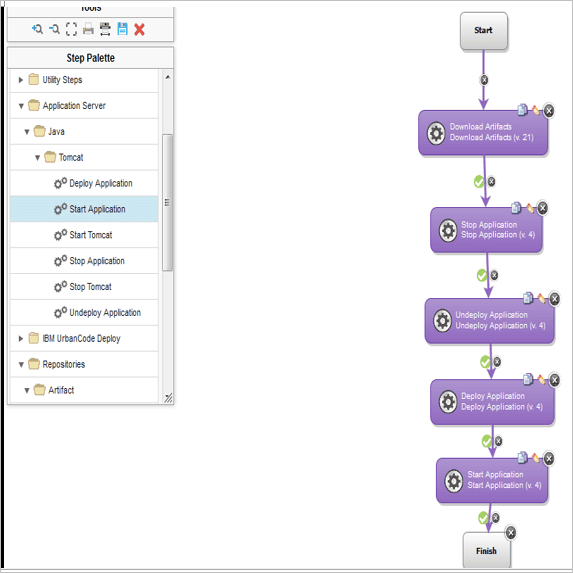

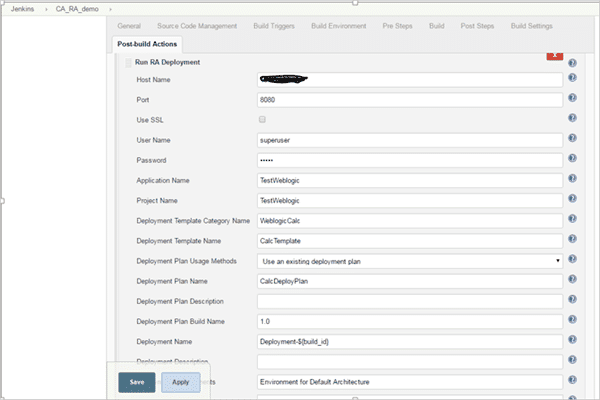

#9) CA-Release Automation (RA)

CA Release Automation is another similar commercial tool from Computer Associates that provides the above-mentioned features for automated application deployment.

Once the application is built successfully and a WAR file is generated, it is picked up as part of a Jenkins post-build action and deployed to the target environment/app server according to the flow defined in CA RA.

The usual steps for a deployment in either IBM UCD or CA RA after the Jenkins build are as follows; they can be changed to suit your requirements:

- Download the application WAR/JAR/EAR file to the target environment.

- Stop the current application from running.

- Un-install the application.

- Install the new version of the application by downloading it from Artifactory or Nexus.

- Start the application.

- Check the application status.

- If the application does not deploy successfully, for example because of an environment compatibility issue, a rollback action can be performed as well.
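The step sequence above, including the rollback, can be sketched as follows. In IBM UCD or CA RA these steps are configured in the tool rather than written by hand; `FakeAppServer` is a hypothetical in-memory stand-in for a real app server such as Tomcat, used only so that the control flow can run:

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class FakeAppServer:
    """Hypothetical in-memory app server, standing in for Tomcat, JBoss, etc."""
    deployed: Optional[str] = None
    running: bool = False
    broken_versions: set[str] = field(default_factory=set)  # versions that fail the status check

    def stop(self) -> None:
        self.running = False

    def uninstall(self) -> None:
        self.deployed = None

    def install(self, version: str) -> None:
        # In a real pipeline the WAR/JAR/EAR is first downloaded from Artifactory or Nexus
        self.deployed = version

    def start(self) -> None:
        self.running = self.deployed is not None

    def healthy(self) -> bool:
        return self.running and self.deployed not in self.broken_versions


def deploy(server: FakeAppServer, new_version: str) -> bool:
    """Stop, uninstall, install, start, and check; roll back to the previous version on failure."""
    previous = server.deployed
    server.stop()
    server.uninstall()
    server.install(new_version)
    server.start()
    if server.healthy():
        return True
    # Rollback: remove the failed version and restore the previous one, if there was one
    server.stop()
    server.uninstall()
    if previous is not None:
        server.install(previous)
        server.start()
    return False
```

Capturing `previous` before anything is stopped is the key design choice: without it, there is nothing to roll back to once the old version has been uninstalled.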

Example: Post-build Action Integration of Jenkins with CA RA

Contact the local IBM or CA team for pricing on IBM Urbancode Deploy or CA RA.

#10) Docker

We are all familiar with virtualization, where one physical server hosts multiple virtual machines. These VMs can run Windows or Linux operating systems, each with its own libraries, binaries, and applications.

Typically, every VM is very large. From a DevOps point of view, you can dedicate a VM to each environment, such as Dev, QA, and PROD. With VMs, the entire hardware is virtualized, whereas Docker uses containers, which virtualize the operating system.

Why I like this tool – You can use Docker to package the application (e.g., a WAR file) along with its dependencies so that the same package can be deployed to different environments.

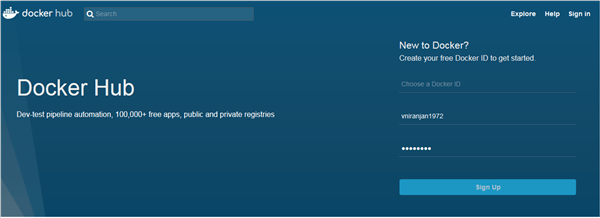

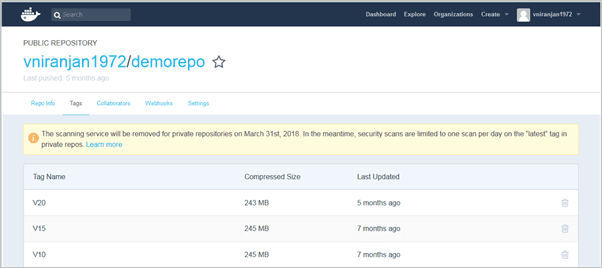

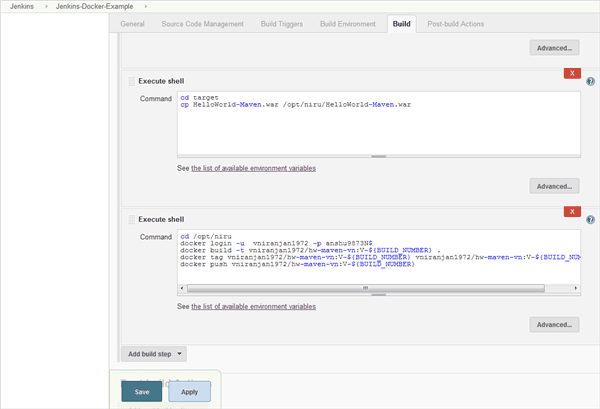

Docker follows a BUILD-SHIP-RUN workflow: you build images (from a Dockerfile), ship them by publishing them to a registry (such as Docker Hub), and run an image to create a container in any environment.

Docker Hub is a registry of community-built images, and you can also upload and store the images you build there.

Image repository with Tags

Sample Dockerfile to automate the deployment of WAR files to Tomcat

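```dockerfile
# Start from the official Tomcat base image
FROM tomcat
# Copy the WAR into Tomcat's webapps directory, which Tomcat deploys automatically
COPY sample.war /usr/local/tomcat/webapps/
# Run Tomcat in the foreground so the container keeps running
CMD ["catalina.sh", "run"]
```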
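With a Dockerfile like this in place, the BUILD-SHIP-RUN workflow maps onto three Docker CLI commands: `docker build`, `docker push`, and `docker run`. The Python sketch below only assembles those commands (it runs them through `subprocess` only if `execute` is called); the image name `myorg/sample-app` is hypothetical, and pushing to Docker Hub assumes you have already run `docker login`:

```python
import subprocess


def build_ship_run(image: str, tag: str, context: str = ".", host_port: int = 8080) -> list[list[str]]:
    """Return the docker commands for the BUILD, SHIP, and RUN steps."""
    ref = f"{image}:{tag}"
    return [
        ["docker", "build", "-t", ref, context],  # BUILD: image from the Dockerfile in `context`
        ["docker", "push", ref],                   # SHIP: publish to the registry (e.g. Docker Hub)
        # RUN: start a detached container, mapping host_port to Tomcat's default port 8080
        ["docker", "run", "-d", "-p", f"{host_port}:8080", ref],
    ]


def execute(commands: list[list[str]]) -> None:
    """Run each command in order; check=True raises on the first failure."""
    for cmd in commands:
        subprocess.run(cmd, check=True)


if __name__ == "__main__":
    for cmd in build_ship_run("myorg/sample-app", "1.0"):
        print(" ".join(cmd))
```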

Jenkins integration with Docker

Website: https://hub.docker.com/

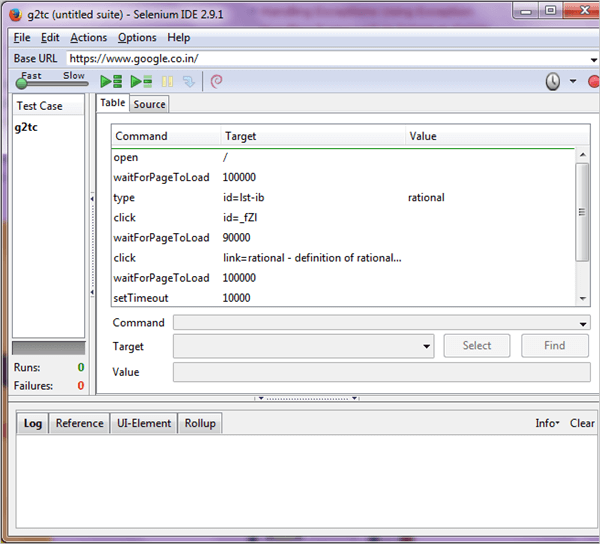

#11) Selenium

Selenium is a free, open-source tool for automated functional testing of web applications. Selenium IDE, part of the Selenium project, is installed as a browser plugin (originally for Firefox) and helps record and play back test scenarios. In the DevOps cycle, Selenium automated tests are invoked once the application is deployed to the Test or QA environment.

Why I like this tool – Selenium is very easy to learn. I suggest reading the Selenium tutorial series to understand the installation process for the Firefox browser, gain knowledge, and master automated testing of web applications. Visit its website to read more about Selenium features and downloads.

Website: http://www.seleniumhq.org/

#12) Nagios

Tool Name: Nagios Core

It is an open-source tool written in C, used for network, server, and application monitoring.

Key Features:

- Helps in monitoring Windows, Linux, UNIX, and Web applications.

- It provides two methods for server monitoring: agent-based and agentless.

- For network monitoring, it checks network connections, routers, switches, and other devices.
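Nagios checks are performed by plugins: small programs whose exit code (0 = OK, 1 = WARNING, 2 = CRITICAL, 3 = UNKNOWN) tells Nagios the service state, and whose first line of output is a status message, optionally followed by `|` and performance data. A minimal sketch of a disk-usage check following that convention; the thresholds are arbitrary examples, and real deployments usually rely on ready-made plugins such as `check_disk` from the Nagios Plugins project:

```python
import shutil

# Nagios plugin exit codes
OK, WARNING, CRITICAL, UNKNOWN = 0, 1, 2, 3
LABELS = {OK: "OK", WARNING: "WARNING", CRITICAL: "CRITICAL", UNKNOWN: "UNKNOWN"}


def evaluate(used_pct: float, warn: float, crit: float) -> tuple[int, str]:
    """Map a disk-usage percentage to a Nagios state and status line."""
    if used_pct >= crit:
        code = CRITICAL
    elif used_pct >= warn:
        code = WARNING
    else:
        code = OK
    # Status text, then performance data after the pipe: label=value;warn;crit
    return code, f"DISK {LABELS[code]} - {used_pct:.1f}% used | used_pct={used_pct:.1f}%;{warn};{crit}"


def main(path: str = "/", warn: float = 80.0, crit: float = 90.0) -> int:
    """Print the status line and return the exit code Nagios expects."""
    try:
        usage = shutil.disk_usage(path)
    except OSError as exc:
        print(f"DISK UNKNOWN - {exc}")
        return UNKNOWN
    code, message = evaluate(100.0 * usage.used / usage.total, warn, crit)
    print(message)
    return code

# When installed as a plugin script, end with: sys.exit(main())
```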

Cost: Free.

Companies using the tool: Cisco, PayPal, UnitedHealthcare, Airbnb, FanDuel, etc. It has more than 9,000 customers.

Download Link: https://www.nagios.org/downloads/nagios-core/

#13) Chef

Tool Name: Chef DK

This tool is used to ensure that configurations are applied consistently everywhere, and it helps automate infrastructure.

Key Features:

- It ensures that your configuration policies will remain flexible, versionable, testable, and readable.

- It helps in standardizing and continuously enforcing the configurations.

- It automates the whole process of ensuring that all systems are correctly configured.
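The idea behind continuously enforcing configuration is desired-state convergence: each run compares what a node should look like with what it currently looks like and changes only what differs, so repeated runs are safe (idempotent). The sketch below illustrates that idea in Python with made-up resource names; it is not Chef’s API, since Chef recipes are written in a Ruby-based DSL:

```python
def converge(current: dict[str, str], desired: dict[str, str]) -> list[str]:
    """Update `current` to match `desired` and return the changes that were made."""
    changes = []
    for resource, state in sorted(desired.items()):
        if current.get(resource) != state:
            current[resource] = state  # repair only the resources that drifted
            changes.append(f"{resource} -> {state}")
    return changes


if __name__ == "__main__":
    node = {"package:tomcat": "installed"}  # hypothetical current state of a node
    policy = {"package:tomcat": "installed", "service:tomcat": "running"}
    print(converge(node, policy))  # first run: starts the service
    print(converge(node, policy))  # second run: nothing to do
```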

Cost: Free

Companies using the tool: Facebook, Firefox, Hewlett Packard Enterprise, Google Cloud Platform, etc. It has many more customers.

Download Link: https://downloads.chef.io/chefdk/3.0.36

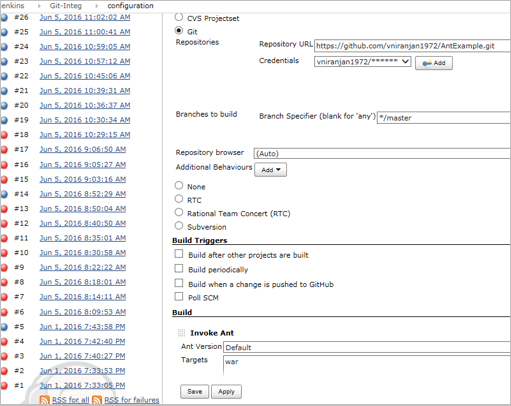

#14) Jenkins

Tool Name: Jenkins

Jenkins is an open-source automation server written in Java. It helps many projects automate their builds and deployments.

As a free, open-source Continuous Integration server, Jenkins helps automate the activities mentioned earlier: build, code analysis, and storing the artifacts. These activities are typically triggered when a developer or team commits code to the version control repository.

Builds can also be scheduled in Jenkins, e.g., once every 2 to 3 days or every Friday at 10 PM. The schedule depends on when the tasks assigned to individual developers are due, which is planned in the Sprint Backlog in Jira.
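In Jenkins, such a schedule is entered under "Build periodically" using cron syntax (minute, hour, day of month, month, day of week), so every Friday at 10 PM is `0 22 * * 5`; Jenkins also accepts `H` in place of a fixed minute to spread load across jobs. As a worked example of what that spec means, this small Python function (a hypothetical helper, not part of Jenkins) computes the next matching run time:

```python
from datetime import datetime, timedelta


def next_weekly_run(now: datetime, weekday: int = 4, hour: int = 22, minute: int = 0) -> datetime:
    """Next time strictly after `now` on `weekday` (Monday=0 ... Friday=4) at hour:minute."""
    candidate = now.replace(hour=hour, minute=minute, second=0, microsecond=0)
    candidate += timedelta(days=(weekday - now.weekday()) % 7)
    if candidate <= now:  # today is the right weekday, but the time has already passed
        candidate += timedelta(days=7)
    return candidate


if __name__ == "__main__":
    # Wednesday 15 May 2024, 09:30 -> Friday 17 May 2024, 22:00
    print(next_weekly_run(datetime(2024, 5, 15, 9, 30)))
```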

Key Features:

- It helps in distributing the work on multiple machines and platforms.

- Jenkins can act as a continuous delivery hub for the projects.

- Supported operating systems are Windows, Mac OS X, and UNIX.

Why I like this tool – Jenkins has many plugins and works as a CI tool for various technologies like C/C++, Java/J2EE, .NET, AngularJS, etc.

It also provides plugins to integrate with SonarQube for code review, JFrog Artifactory for storing binary artifacts, and testing tools like Selenium as part of the automation process, which reduces manual intervention as much as possible.

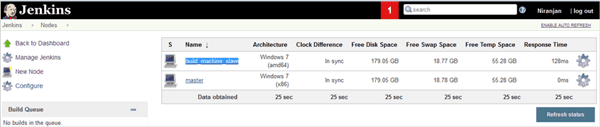

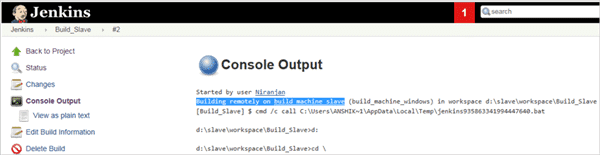

Running all builds on a single build machine is not a good option. Jenkins provides a Master-Slave feature (now called controller and agents) in which builds are distributed to run in different environments, taking load off the master server.

Jenkins can visualize the steps of the entire build/delivery process as a pipeline. It can also automate deployments to app servers like Tomcat, JBoss, and WebLogic through plugins, as well as to container platforms like Docker.

However, I prefer deployments to be done with a dedicated deployment tool like IBM Urbancode Deploy or CA RA, integrated with Jenkins.

Many organizations use Jenkins today to automate the entire build process, maintain a separate central server, and facilitate the deployment as well.

Cost: Free

Example – Jenkins configuration with Git

Example – Jenkins Delivery Pipeline

Example – Jenkins Master Slave

Companies using the tool: Capgemini, LinkedIn, AngularJS, OpenStack, Luxoft, Pentaho, etc.

Download Link: https://jenkins.io/download/

#15) Vagrant

Tool Name: Vagrant

Vagrant is open-source software developed by HashiCorp and written in Ruby. It supports software development by managing development environments.

Key Features:

- Supported operating systems are Windows, Mac OS, Linux, and FreeBSD.

- Simple and easy to use.

- It can be integrated with existing configuration management tools like Chef, Puppet, etc.

Cost: Free

Companies using the tool: BBC, Disqus, Mozilla, Edgecast, Expedia, O’Reilly, Yammer, nature.com, LivingSocial, ngmoco, Nokia, etc.

Download Link: https://www.vagrantup.com/downloads.html

#16) Splunk

Tool Name: Splunk Enterprise/ Splunk Cloud/ Splunk Light/ Splunk Free

Splunk is a software platform that converts machine data into valuable information by gathering data from different machines, websites, and other sources. Splunk is headquartered in San Francisco.

Key Features:

- Splunk Enterprise will help you aggregate, analyze, and find answers from your machine data.

- Splunk Light provides features for small IT environments.

- With the help of Splunk Cloud, Splunk can be deployed and managed as a service.
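To illustrate what turning machine data into information means in practice, the sketch below counts ERROR events per host from raw log lines, roughly what a Splunk search ending in `stats count by host` would report once the events are indexed and a level field is extracted. The log format (timestamp, host, level, and message separated by spaces) is an assumption, and this is plain Python, not Splunk’s Search Processing Language:

```python
from collections import Counter
from typing import Iterable


def errors_by_host(lines: Iterable[str]) -> Counter:
    """Count ERROR events per host; lines are 'timestamp host level message' (assumed format)."""
    counts: Counter = Counter()
    for line in lines:
        parts = line.split(maxsplit=3)
        if len(parts) >= 3 and parts[2] == "ERROR":
            counts[parts[1]] += 1
    return counts


if __name__ == "__main__":
    logs = [
        "2024-05-17T10:00:01 web01 INFO started",
        "2024-05-17T10:00:05 web02 ERROR db timeout",
        "2024-05-17T10:00:09 web02 ERROR db timeout",
        "2024-05-17T10:01:00 web01 ERROR disk full",
        "malformed line",
    ]
    print(errors_by_host(logs).most_common())
```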

Cost:

Splunk Free: Free

Splunk Light: Starts from $75

Splunk Enterprise: Starts from $150

Splunk Cloud: Contact them for pricing details.

Companies using the tool: Hyatt, Coca-Cola, Zillow, Discovery, Domino’s, e-Travel, PagerDuty, and many more customers.

Download Link: https://www.splunk.com/en_us/download.html

#17) Git – Version Control Tool

One of the fundamental building blocks of any CI setup is a strong version control system. Even though there are several version control tools on the market today, like SVN, ClearCase, RTC, and TFS, Git fits in very well as a popular, distributed version control system for geographically distributed teams.

It is free and open source and supports most version control features, such as check-in, commits, branches, merging, labels (tags), and pushing to and pulling from GitHub.

It is easy to learn and maintain for teams looking for their first tool to version control their artifacts, and many websites show how to learn and master Git.

For a distributed setup in which your source code and other files are shared with your teams, you will need an account with an online hosting service such as GitHub.

Though I have suggested Git, it is up to teams and organizations to choose the version control tool that best fits their setup, or that their customer recommends, for the DevOps pipeline.

Git can be downloaded for Windows, macOS, and Linux from https://git-scm.com/downloads

#18) Ansible

Tool Name: Ansible

This open-source tool automates IT tasks such as application deployment and configuration management.

Key Features:

- It provides agentless architecture.

- It supports powerful workflow orchestration.

- It is simple and easy to use.

Cost: Free

Companies using the tool: Cisco, DLT, Juniper, and hundreds of other customers.

Download Link: https://docs.ansible.com/ansible/latest/installation_guide/intro_installation.html

#19) Prometheus

Tool Name: Prometheus

Description: It is an open-source monitoring and alerting tool.

Key Features:

- It has a multi-dimensional data model.

- It has a flexible query language.

- It supports pushing time series through an intermediary gateway (the Pushgateway).

- It supports multiple modes of graphing and dashboarding.

Cost: Free

Companies using the tool: Ericsson, Maven, Jodel, Quobyte, Show Max, Argus, SoundCloud, and many more customers.

Download Link: https://prometheus.io/download/

#20) Ganglia

Tool Name: Ganglia

It is an open-source monitoring system for clusters and grids.

Key Features:

- It scales to handle clusters with 2000 nodes.

- It uses technologies such as XML for data representation, XDR for compact, portable data transport, and RRDtool for data storage and visualization.

- It uses well-defined data structures and algorithms.

Cost: Free

Companies using the tool: Twitter, Flickr, Last.fm, Dell, Microsoft, Berkeley, Cisco, Motorola, and many more users.

Download Link: http://ganglia.info/?page_id=66

#21) Snort

Tool Name: Snort

Snort is an open-source network intrusion detection and prevention system. It was originally created by Martin Roesch and is now developed by Cisco Systems.

Key Features:

- Protocol Analysis

- Content Searching and Matching

- Real-time traffic analysis

Cost: Free

Companies using the tool: Snort has more than 500,000 registered users and has been downloaded millions of times.

Download Link: https://www.snort.org/downloads

#22) Pagerduty

Tool Name: Pagerduty

PagerDuty is a SaaS platform for incident response. The company was founded in 2009.

Key Features:

- Sends notifications by email, SMS, or phone call.

- It can be integrated with monitoring and security tools.

- It supports both user-based and team-based permissions.
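Monitoring tools typically raise PagerDuty incidents through its Events API v2 by POSTing a JSON event to `https://events.pagerduty.com/v2/enqueue`. A minimal sketch, assuming an integration (routing) key from a PagerDuty service; the key, summary, and host below are placeholders, and the network call in `send` is shown but not exercised here:

```python
import json
import urllib.request
from typing import Optional

EVENTS_URL = "https://events.pagerduty.com/v2/enqueue"
SEVERITIES = {"critical", "error", "warning", "info"}


def trigger_event(routing_key: str, summary: str, source: str,
                  severity: str = "error", dedup_key: Optional[str] = None) -> dict:
    """Build an Events API v2 'trigger' event."""
    if severity not in SEVERITIES:
        raise ValueError(f"unsupported severity: {severity}")
    event = {
        "routing_key": routing_key,
        "event_action": "trigger",
        "payload": {"summary": summary, "source": source, "severity": severity},
    }
    if dedup_key is not None:
        event["dedup_key"] = dedup_key  # events sharing a key are grouped into one alert
    return event


def send(event: dict) -> dict:
    """POST the event to PagerDuty and return the decoded JSON response."""
    request = urllib.request.Request(
        EVENTS_URL,
        data=json.dumps(event).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.load(response)
```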

Cost: It has four pricing plans – Lite, Basic, Standard, and Enterprise. All plans will be billed annually.

Lite: $9 per user per month

Basic: $29 per user per month

Standard: $49 per user per month

Enterprise: $99 per user per month

Companies using the tool: Comcast, Google, Credit Suisse, Staples, GAP, eBay, and Panasonic. It has more than ten thousand customers.

Download Link: https://www.pagerduty.com/sign-up/

#23) Puppet

Tool Name: Puppet

Puppet is an open-source configuration management tool. During software development, it ensures that all configurations are applied consistently everywhere.

Key Features:

- It can work for hybrid infrastructure and applications.

- Uses a client-server architecture.

- Supports Windows, Linux, and UNIX operating systems.

Cost: Free

Companies using the tool: Cisco, Scripps Networks, Teradata, and JPMorgan Chase & Co.

Download Link: https://www.puppet.com/community/open-source/free-trial

#24) Gulp

Tool Name: Gulp.js

This JavaScript toolkit automates time-consuming tasks in the development workflow.

Key Features:

- Easy to use.

- Simple plugins that work as expected.

- Builds faster by not writing intermediate files to disk.
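Gulp chains plugins with `.pipe()` so that file contents flow from one step to the next in memory, and only the final result is written out with `gulp.dest()`. The same streaming idea can be sketched with Python generators; this illustrates the concept rather than Gulp’s API, and the toy transforms below (drop `//` comment lines, collapse whitespace, rename to `.min.js`) are hypothetical and far simpler than a real minifier:

```python
from typing import Iterable, Iterator, Tuple

File = Tuple[str, str]  # (path, contents) held in memory


def strip_comments(files: Iterable[File]) -> Iterator[File]:
    """Drop lines that consist only of a // comment."""
    for path, text in files:
        kept = [line for line in text.splitlines() if not line.strip().startswith("//")]
        yield path, "\n".join(kept)


def collapse_whitespace(files: Iterable[File]) -> Iterator[File]:
    """Toy 'minify' step: collapse every run of whitespace into a single space."""
    for path, text in files:
        yield path, " ".join(text.split())


def rename(files: Iterable[File], suffix: str = ".min.js") -> Iterator[File]:
    """Change the file extension; nothing has been written to disk at this point."""
    for path, text in files:
        base = path[: -len(".js")] if path.endswith(".js") else path
        yield base + suffix, text


if __name__ == "__main__":
    sources = [("app.js", "// entry point\nconst a = 1;\n\nconsole.log(a);\n")]
    # Each stage lazily pulls from the previous one, much like chained .pipe() calls
    for path, text in rename(collapse_whitespace(strip_comments(sources))):
        print(path, "=>", text)
```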

Cost: Free

Companies using the tool: More than 1,000 companies use this toolkit, and it has more than 100,000 users.

Download Link: https://github.com/gulpjs/gulp/blob/v3.9.1/docs/getting-started.md

#25) Kamatera

Tool Name: Kamatera

Kamatera is a cloud platform for deploying applications.

Cloud computing offers many benefits to application developers. To take full advantage of them, choose a cloud provider that lets you deploy your applications across multiple locations worldwide for a fast, responsive user experience.

Kamatera lets you deploy the most popular applications on its cloud infrastructure in seconds, for free, with no setup fee and no commitment; you can cancel at any time.

Just select the application you want to deploy from a list of popular applications such as:

cPanel, Docker, DokuWiki, Drupal, FreeNAS, Jenkins, Joomla, LEMP, Magento, Memcached, MinIO, MongoDB, NFS, Nextcloud, OpenVPN, Redis, Redmine, Tomcat, WordPress, Zevenet, MySQL, Node.js, pfSense, phpBB, phpMyAdmin

#26) Buddy

Tool Name: Buddy

Buddy is a CI/CD tool that offers more than 100 predefined actions to make building, testing, and deploying applications easier. It can be tried for free.

Key Features:

- Ready to use actions

- Changeset-based executions

- Attachable microservices

- Real-time progress monitoring

- Multi-repository workflows

- IaaS and AWS deployments

- Performance and app monitoring

Conclusion

The purpose of this tutorial was to introduce you to the main DevOps tools and services used for On-Premise and Cloud deployment.

It also aimed to show DevOps enthusiasts the popular tools available and how they integrate into a single, end-to-end view of automation with little manual intervention.

I also want to mention a few other equally popular DevOps tools, like Bitbucket (a web-based version control repository similar to GitHub, owned by Atlassian) and Bamboo (a Continuous Integration and Continuous Deployment tool similar to Jenkins, developed by Atlassian). Chef, Puppet, and Ansible, covered above, are also widely used for managing infrastructure and application deployment.

Our upcoming tutorial will explain the installation and configuration of commonly used open-source DevOps tools.