Understand the ethics of Facial Recognition Technology (FRT) with our step-by-step guide, and learn about the legal risks and implications of facial recognition that everyone needs to know:

The rise of Facial Recognition Technology has been rapid and pervasive around the globe. Corporations, governments, and law enforcement agencies have either embraced this tech completely or are in the process of deploying it to serve a wide range of purposes.

While the development of FRT has been swift, the legislative landscape surrounding it is vague at best. Governments and regulatory bodies seem to be struggling to catch up with FRT’s growing relevance in modern society.

Table of Contents:

- Is Facial Recognition Ethics Legal: Complete Guide

- Facial Recognition Technology and Its Applications in Modern Society

- Ethics of Facial Recognition Technology

- Addressing the Ethical Issues of Facial Recognition

- The Legislative Landscape for FRT

- Two Use-Cases of Corporations Endorsing the Ethical Use of Facial Recognition

- Implications of FRT in Health-Care Settings

- Ethical Issues with Implementation of Emotion Recognition Tech

- Practical Solutions to Enforcing Data Deletion and Right-to-Be-Forgotten Policies

- Frequently Asked Questions

- Conclusion

Is Facial Recognition Ethics Legal: Complete Guide

The spread of this tech has made many people legitimately concerned about its ethical implications. Law enforcement agencies have a long track record of using technology to encroach on citizens’ privacy.


There’s already evidence of that happening with Facial Recognition Tech, with several examples of identity fraud, mass surveillance, and racial profiling coming to light.

[Via NEC]

As such, I believe it is high time we had a serious conversation about FRT and answered some burning questions around its ethical use.

So, without much further ado, let’s dive in.

Facial Recognition Technology and Its Applications in Modern Society

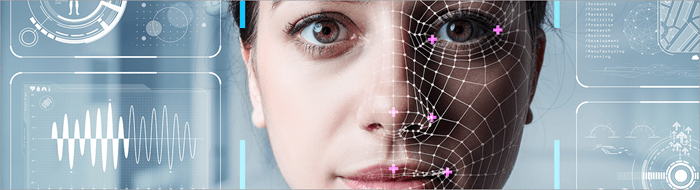

Facial Recognition Tech can be defined as a piece of biometric technology that’s used to map an individual’s face for identification.

The way it works is quite simple to comprehend. All forms of Facial Recognition Tech involve a camera that captures an individual’s face. The software then maps the captured facial features down to the minutest details.

[Via Pexels]

The tech maps everything from the jawline to the nose, and even the space between the eyebrows. It then converts the results into a unique numerical code, also known as a faceprint.

The tech then compares this faceprint against a database and reports the closest match as the individual’s likely identity.
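The comparison step can be illustrated with a minimal sketch. It assumes a faceprint is simply a list of numbers and that two faceprints are compared with cosine similarity; the names `identify` and `database`, the toy vectors, and the 0.9 threshold are all hypothetical, and real systems use much longer vectors and their own matching rules.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def identify(probe, database, threshold=0.9):
    """Return the name whose stored faceprint is most similar to the probe,
    or None if no stored faceprint reaches the (hypothetical) threshold."""
    best_name, best_score = None, threshold
    for name, faceprint in database.items():
        score = cosine_similarity(probe, faceprint)
        if score >= best_score:
            best_name, best_score = name, score
    return best_name

# Toy 4-number faceprints, invented for illustration only.
database = {
    "alice": [0.9, 0.1, 0.3, 0.4],
    "bob": [0.1, 0.8, 0.5, 0.2],
}
probe = [0.88, 0.12, 0.31, 0.41]    # a new capture that closely resembles "alice"
stranger = [0.5, 0.5, -0.7, 0.1]    # a face that is not enrolled

print(identify(probe, database))
print(identify(stranger, database))
```

The threshold is the key design choice in a sketch like this: set it too low and strangers are matched to enrolled people, set it too high and genuine matches are missed.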

Key examples of FRT include:

- Unlocking a phone through Face ID.

- Police identifying suspects from surveillance footage.

- Airports screening passengers with facial recognition.

- Businesses granting access to authorized personnel.

- Online services onboarding their users safely.

On the surface, facial recognition technology can be a force for good. In the wrong hands, however, this tech can become a tool for exploitation.

For instance, the very tech being used by law enforcement operatives to identify suspects can also be used to racially profile citizens. The very tech being used by governments to maintain law and order can also be used by repressive regimes for mass surveillance.

FRT is a double-edged sword. Without a proper regulatory framework in place, I would say that having ethical concerns over its potential misuse by entities in positions of power is justified.

Ethics of Facial Recognition Technology

We are living in a time where critics no longer have to rely on mere assumptions to voice their concerns over the use of FRT. We now have several stories and real-world examples of law enforcement operatives, repressive regimes, and malicious individuals abusing the tech to serve their own ulterior agendas.

Below is a summary of the main facial recognition ethical issues that have come into the spotlight recently.

| Ethical Issue | Who It Harms | Consequences |
| --- | --- | --- |
| Racial Profiling | People of color, women, and the elderly | Wrongful incarceration, biased policing, systemic racism. |
| Mass Surveillance | Journalists, activists, general public, protestors | Targeted harassment, free speech violation, and crushing of civil liberties. |
| Data Privacy | Consumers, general public | Unauthorized access to personal data, involuntary surveillance. |
| Data Breach | Government agencies, corporations, consumers | Identity theft, risk of deepfake circulation, and unauthorized access to sensitive data. |

#1) Racial Profiling and Discrimination

Researchers have tested the accuracy of Facial Recognition Tech many times since its early days. Many of these studies have found that the systems in place today are biased, especially against people of color, the elderly, women, and communities overrepresented in policing databases.

A test conducted in 2019 by the federal government, through the National Institute of Standards and Technology (NIST), showed that the systems worked best when identifying white middle-aged men. They were far less accurate at identifying women, children, the elderly, and people of color. False positives of this kind have led to law enforcement operatives targeting and wrongfully arresting people of color.

A report published by the ACLU, citing the 2018 “Gender Shades” study, noted that the error rate for light-skinned men was 0.8%, compared to 34.7% for dark-skinned women.

This blows apart the misconception that technology is infallible and therefore unbiased. Tech is created by people, and people aren’t infallible. People are biased. So, it makes perfect sense that their creation would be biased, too.

#2) Mass Surveillance

In 2021, a group of 51 organizations wrote an open letter to EU commissioners. They requested a complete ban on all facial recognition tech designed to spy on citizens. Their concerns stemmed from the very legitimate possibility of law enforcement bodies and regimes using this system for mass surveillance.

Ever since FRT was introduced, various government bodies have justified using it to maintain law and order. However, there are just too many stories out there of facial recognition being used to trample fundamental civil liberties like the right to protest.

In 2020, US law enforcement bodies used facial recognition to monitor Black Lives Matter protests. This led to several activists being targeted at their homes. The London Metropolitan Police also confirmed using FRT to monitor thousands of people who were attending the coronation of King Charles III in 2023.

In the hands of repressive regimes, mass surveillance via facial recognition can take a dark turn. Several activists, journalists, and people belonging to marginalized communities have been bullied, harassed, and arrested for simply voicing their dissent.

#3) Data Privacy

With Facial Recognition Tech being used in public spaces, many people have no idea they are being watched or monitored. Simply put, you are being tracked, and your face is being mapped and processed in a database without your explicit consent. This is your data, collected by a third party without your knowledge.

You also have no say in how this data is used, who owns it, or how it is stored. We’ve already seen stories where images of popular celebrities were scanned to create inappropriate deepfakes. Somebody could already be using your image to test facial recognition models without your knowledge or consent.

Law enforcement bodies can track your movements using cameras installed in public spaces. This level of tracking could prove dangerous for people in sensitive situations, such as those visiting an abortion clinic or organizing protests.

#4) Data Breach

Privacy concerns with regard to data breaches are nothing new. However, things get even more serious when it comes to the breach of facial data. If you suspect your password was compromised, you can change it without hesitation. You cannot change your face if your facial data was breached.

In January 2024, a finance employee at the engineering firm Arup was tricked into transferring about $25 million after attending a video call with someone fraudulently posing as the CFO using deepfake technology.

Cases of identity fraud like this one are on the rise. Scammers and hackers can use deepfake tech to try to fool face-based authentication, such as your phone’s Face ID, and gain access to sensitive information.

A breach involving facial data can be challenging to mitigate. Clear, robust regulations are essential to addressing these challenges.

Addressing the Ethical Issues of Facial Recognition

It should be clear to you by now that the rise of facial recognition technology has raised some legitimate ethical issues. Critics and activists are right in their demands for tighter legal frameworks that ensure accountability.

Accountability in the context of FRT would mean a scenario where governments and corporations are obligated to justify their use of this technology and take responsibility for its abuse, if any.

Perhaps we can take the right step towards this path by raising and addressing the following questions:

- Who should be in charge of developing, purchasing, and testing facial recognition systems?

- What purposes would justify using facial recognition to capture an individual’s facial data?

- What parameters should be met when building facial databases, and how should their purpose be evaluated?

- What consents, notices, checks, and balances should be in place for the ethical accrual of facial data?

- What accountability should be in place to address different misuses of facial recognition tech?

- How should accountability tactics be exercised based on varying circumstances?

- How can the process of registering complaints be made accessible to all?

- Should law enforcement and audit systems be challenged with counter-AI initiatives?

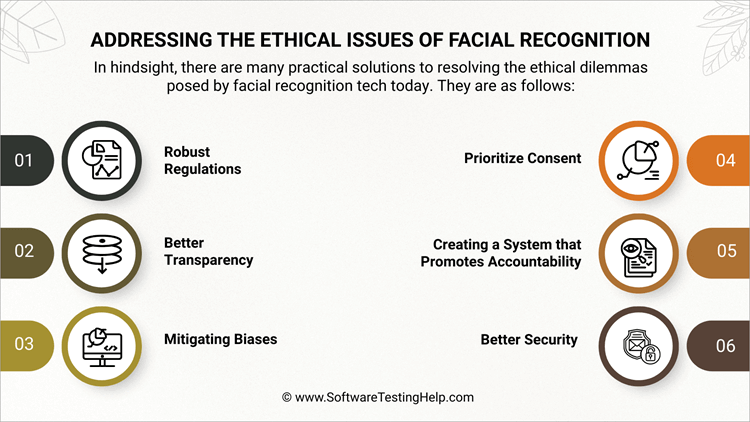

Fortunately, there are many practical solutions to the ethical dilemmas posed by facial recognition tech today. They are:

#1) Robust Regulations

As I mentioned before, laws and regulations involving the deployment of FRT are either vague or weak. Governments worldwide must step up and introduce stronger regulations to rein in this tech.

Clear, robust legal frameworks that dictate how and where facial recognition can be used, especially in relation to surveillance, policing, and data collection, must be introduced urgently.

#2) Better Transparency

It should be mandatory for any entity rolling out FRT, whether it is public or private, to be transparent with the general public.

This means making it obligatory for governments and private entities to disclose what data is collected, why it is collected, and how it is used.

#3) Mitigating Biases

The use of FRT has put certain groups in our community, particularly people of color, women, and the elderly, at a disadvantage. Studies have shown facial recognition to be biased, leading to the targeting, racial profiling, and wrongful arrest of minorities.

This can be mitigated by training facial recognition systems on diverse data sets and applying bias testing standards before deployment.
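As a rough illustration of what a bias test could look like, the sketch below computes an error rate per demographic group from labeled test results and flags the system if the worst group’s rate is too far from the best group’s. The function names, the sample counts, and the 1.5x tolerance are all assumptions made for this example, not an established standard.

```python
from collections import defaultdict

def error_rates_by_group(results):
    """results: iterable of (group, was_correct) pairs.
    Returns a dict mapping each group to its share of incorrect results."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, was_correct in results:
        totals[group] += 1
        if not was_correct:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}

def passes_bias_test(rates, max_ratio=1.5):
    """True if the worst group's error rate is at most max_ratio times
    the best group's error rate (max_ratio is a hypothetical tolerance)."""
    best, worst = min(rates.values()), max(rates.values())
    if best == 0:
        return worst == 0
    return worst / best <= max_ratio

# Invented test results: 100 trials per group.
results = (
    [("group_a", True)] * 98 + [("group_a", False)] * 2
    + [("group_b", True)] * 80 + [("group_b", False)] * 20
)

rates = error_rates_by_group(results)
print(rates)
print("passes bias test:", passes_bias_test(rates))
```

In this invented data, one group sees ten times the error rate of the other, so the check fails and the system would go back for retraining on more representative data.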

#4) Prioritize Consent

The general public should be given a choice to willingly participate in facial recognition systems. No public or private entity should be allowed to capture an individual’s facial data without their knowledge and consent. All citizens are entitled to know when their facial data is captured and how it is being used.

#5) Creating a System that Promotes Accountability

Misuse or abuse of tech like facial recognition should not go unpunished. It is the responsibility of governments and corporations to have independent oversight groups and ethics boards in place to monitor the deployment and use of FRT.

These bodies will investigate the abuse of FRT and ensure their use complies with established ethical regulations. If instances of abuse are found, it will be up to these regulatory bodies to take any action they deem appropriate.

#6) Better Security

As I mentioned before, it’s challenging to mitigate the risk of facial-data breaches. As such, facial recognition software must have robust security features. This means stronger encryption, stricter access controls, and restricted data retention. These measures should reduce the risk of identity theft.

The Legislative Landscape for FRT

Given below is the legislative landscape for Facial Recognition Technology in the USA and Europe.

In the USA

In America, the legal framework involving FRT can be best described as fragmented. FRT isn’t federally regulated. So you have differing laws that vary from state to state and city to city. Multiple attempts have been made in the past to pass a federal regulation for FRT, but none have succeeded.

In the absence of a uniform federal law, many states have taken matters into their own hands and passed various laws in the interest of their citizens. Several states have enforced strict biometric privacy laws, with Illinois having the strictest regulations on this matter.

Under Illinois’ biometric privacy law, private entities are required to get written consent from individuals before collecting their biometric data. They are also prohibited from selling this data for profit. Individuals may sue entities that violate the law.

Texas and Washington have considerably more lenient laws regarding biometric data. California, Virginia, and Colorado, meanwhile, have privacy laws that treat biometric data as sensitive information.

You’ll also find bans or moratoriums on the use of FRT by police or government bodies in cities like Boston, San Francisco, and Portland. In Maine, government agencies are restricted from using FRT unless it is to investigate a serious crime.

In Washington, agencies using FRT are required to hold community meetings regularly and provide accountability reports. In Virginia, law enforcement agencies must show reasonable suspicion before using FRT. The technology must also meet an accuracy threshold of 98%, a requirement intended to reduce the risk of misidentification and racial profiling.

In the EU and the UK

The data protection laws governing the use of FRT are far more comprehensive in the EU and the UK than they are in the USA. That said, the UK’s laws have become more fragmented since it departed from the EU.

In EU countries, the GDPR (General Data Protection Regulation) is the main law regulating how biometric data, including facial data, is processed. In 2024, the EU adopted the AI Act, which bans law enforcement from using live facial recognition in public spaces, with narrow exceptions. It also bans building facial recognition databases by indiscriminately scraping facial images from online sources.

The exceptions are limited. Law enforcement may use AI-driven facial recognition for targeted searches, such as for terrorism and kidnapping suspects. Law enforcement operatives using this tech must also conduct a fundamental rights impact assessment.

I believe this act is quite effective in addressing the concerns many people have with AI facial recognition ethics.

In the UK, the UK GDPR and the Data Protection Act 2018 regulate FRT. Under these laws, companies must have a “lawful basis”, and law enforcement agencies must have a “law enforcement basis”, to use or process someone’s biometric data. Oversight on this matter is generally provided by the Information Commissioner’s Office.

While the EU has specific laws in place to regulate the use of facial recognition, the UK does not. The UK also seems far more enthusiastic in rolling out facial recognition for policing.

Two Use-Cases of Corporations Endorsing the Ethical Use of Facial Recognition

While governments struggle with implementing a strong legal framework to control the use of facial recognition systems, some companies are far ahead of the curve. I want to shed light on two such use cases that might encourage other prominent players to finally step up.

#1) IBM

IBM seems to have taken the ethical concerns raised by critics of FRT very seriously, as evidenced by the restrictions it has imposed on the sale of its facial recognition technology. IBM has also made specific recommendations to the US Department of Commerce to make sure such tech does not fall into the wrong hands.

In a bid to curb mass surveillance and racial profiling, IBM proposed restrictions on FRT that employs “1-to-many” facial-data matching. It has also proposed restrictions that would prevent certain foreign governments from acquiring cloud computing components with integrated FRT.

It also wants to limit access to online image databases, which could be used to train “1-to-many” FRT systems. Furthermore, it has proposed using the Wassenaar Arrangement to prevent repressive regimes from acquiring FRT systems.
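To see why “1-to-many” matching draws more concern than “1-to-1” matching, it helps to look at how false matches add up. A 1-to-1 check compares a face against a single claimed identity, while a 1-to-many search compares it against every entry in a database. The sketch below assumes each comparison has the same small, independent chance of a false match; both the independence assumption and the 1-in-1,000 rate are simplifications chosen for illustration, not measured figures.

```python
def chance_of_false_match(false_match_rate, gallery_size):
    """Probability that at least one of gallery_size independent comparisons
    produces a false match: 1 - (1 - rate) ** gallery_size."""
    return 1 - (1 - false_match_rate) ** gallery_size

rate = 0.001  # hypothetical: 1 false match per 1,000 comparisons

# gallery_size = 1 corresponds to a 1-to-1 check; larger values are 1-to-many searches.
for gallery_size in (1, 1_000, 1_000_000):
    chance = chance_of_false_match(rate, gallery_size)
    print(f"{gallery_size:>9} comparisons: {chance:.1%} chance of a false match")
```

Under these assumptions, an error rate that looks negligible for unlocking a single phone becomes a near certainty of flagging an innocent person once the search runs against a database of a million faces.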

#2) Microsoft

Microsoft isn’t far behind in making sure its facial recognition systems are being used ethically. Its training materials and resources now include information on how its technology can be used ethically.

Microsoft’s team is hard at work to develop FRT systems that are highly accurate and capable of recognizing faces across varying skin tones and ages. Microsoft has also advocated hiring third-party entities to independently test FRT systems to eliminate the risk of discrimination and bias before they are launched to the general public.

Implications of FRT in Health-Care Settings

Facial Recognition Technology will have a prominent role to play in helping healthcare institutions identify, monitor, and diagnose their patients. Healthcare institutions will need informed consent from their patients to get the data necessary for facial recognition. Some experts are reasonably concerned about whether this data can be anonymized at all.

Once the data is obtained, there is always a risk of its falling into the wrong hands. Healthcare institutions will face another challenge as FRT becomes capable of detecting a wider range of health conditions, including behavioral and developmental disorders.

Both software developers and healthcare institutions will need to decide which kinds of conditions the FRT system will include and whether they will inform patients of incidental findings.

There is no doubt in my mind that FRT systems will evolve and become a significant aspect of healthcare services in the future. However, it should not replace a physician’s judgment. FRT systems should not be used to monitor compliance or track a patient’s whereabouts without their informed consent.

Healthcare institutions will also need to comply with laws like HIPAA before implementing such systems. The FRT system can do a lot of good. However, its implementation should be conditional on a patient’s voluntary consent. Patients should know what data is being collected and how it will be used.

Ethical Issues with Implementation of Emotion Recognition Tech

The ethical implications of emotion recognition tech (ERT) are far more concerning than those of an average FRT system. This is because ERT is founded on the flawed notion that people express emotions solely through their facial expressions. Most systems employing ERT do not have the informed consent of their users to map their facial emotions.

As such, many users aren’t aware that their emotions are being monitored. There is also a risk of such systems using emotional data for exploitative purposes. When employed by authoritarian regimes, their use can be even more concerning.

These regimes could use ERT to monitor people’s emotions in public spaces, leading to a violation of one’s emotional privacy. There is also a chance of this tech misinterpreting somebody’s emotions, leading to their unfair persecution.

We need better regulations that take emotional privacy into account. These regulations are currently lacking. Governments need to step up and implement regulations that can hold organizations accountable for exploiting their customers’ emotional data.

Software developers and companies must be transparent about their use of ERT and must obtain informed consent from their users.

Practical Solutions to Enforcing Data Deletion and Right-to-Be-Forgotten Policies

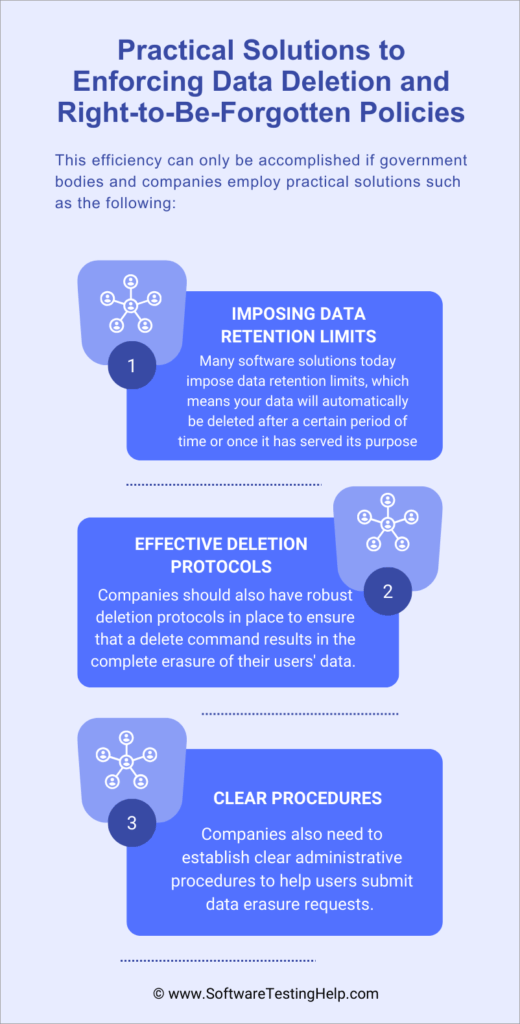

Under regulations like the GDPR, individuals have the right, in many circumstances, to have their data erased from a company’s database. When someone submits a request to erase their data, there should be a system in place to ensure this is done swiftly and with the utmost efficiency.

This efficiency can only be accomplished if government bodies and companies employ practical solutions such as the following:

#1) Imposing Data Retention Limits

Many software solutions today impose data retention limits, which means your data will automatically be deleted after a certain period of time or once it has served its purpose. In certain situations, companies can tie retention to an event rather than a date. For example, a company can erase all biometric data related to an employee once they have left the job.

Automated processes can also help with regular database reviews to find out and remove data that is no longer required. Organizations should also put a system in place wherein account deletion automatically leads to all data and facial biometrics related to that account also being deleted.
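A retention review of this kind could be sketched as follows. The record layout, the one-year limit, and the function name `purge_expired` are assumptions made for this example. The sketch only decides which records should go; actually erasing them from storage is a separate step.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

@dataclass
class BiometricRecord:
    subject_id: str
    collected_at: datetime
    account_active: bool = True  # False once the account is deleted or the employee leaves

def purge_expired(records, now, max_age=timedelta(days=365)):
    """Split records into (kept, purged). A record is purged when it is older
    than max_age or when its account is no longer active."""
    kept, purged = [], []
    for record in records:
        expired = now - record.collected_at > max_age
        if expired or not record.account_active:
            purged.append(record)
        else:
            kept.append(record)
    return kept, purged

now = datetime(2025, 1, 1, tzinfo=timezone.utc)
records = [
    BiometricRecord("current-user", datetime(2024, 10, 1, tzinfo=timezone.utc)),
    BiometricRecord("stale-user", datetime(2023, 6, 1, tzinfo=timezone.utc)),
    BiometricRecord("former-employee", datetime(2024, 12, 1, tzinfo=timezone.utc),
                    account_active=False),
]

kept, purged = purge_expired(records, now)
print("kept:", [r.subject_id for r in kept])
print("purged:", [r.subject_id for r in purged])
```

Running a job like this on a schedule means deletion does not depend on anyone remembering to do it.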

#2) Effective Deletion Protocols

Companies should also have robust deletion protocols in place to ensure that a delete command results in the complete erasure of their users’ data. Techniques like data overwriting and degaussing can achieve this. Organizations should also maintain a detailed audit trail that tracks all deletion actions.
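Here is a minimal sketch of overwriting a stored file before deleting it and recording the action in an audit trail. The file names, the log format, and the function `overwrite_and_delete` are hypothetical. Note that overwriting a file in place does not guarantee erasure on SSDs, copy-on-write file systems, or backups, so treat this as an illustration of the idea rather than a complete protocol.

```python
import json
import os
import tempfile
from datetime import datetime, timezone

def overwrite_and_delete(path, audit_log_path, passes=1):
    """Overwrite the file with random bytes, delete it, and append a
    JSON line describing the action to the audit log."""
    size = os.path.getsize(path)
    with open(path, "r+b") as f:
        for _ in range(passes):
            f.seek(0)
            f.write(os.urandom(size))
            f.flush()
            os.fsync(f.fileno())  # ask the OS to push the overwrite to disk
    os.remove(path)

    entry = {
        "action": "erase",
        "target": os.path.basename(path),
        "bytes_overwritten": size,
        "passes": passes,
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }
    with open(audit_log_path, "a", encoding="utf-8") as log:
        log.write(json.dumps(entry) + "\n")
    return entry

# Demo with a throwaway file standing in for a stored faceprint.
workdir = tempfile.mkdtemp()
template_path = os.path.join(workdir, "faceprint_123.bin")
audit_path = os.path.join(workdir, "deletions.log")
with open(template_path, "wb") as f:
    f.write(b"\x01" * 512)

entry = overwrite_and_delete(template_path, audit_path)
print(entry["action"], entry["target"], entry["bytes_overwritten"])
```

The audit entry records that an erasure happened and when, without keeping any of the erased data itself.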

#3) Clear Procedures

Companies also need to establish clear administrative procedures to help users submit data erasure requests. Make the process accessible by letting users submit these requests via email or forms. A company’s staff must also be trained well to identify and process erasure requests efficiently.

You also need to implement a secure verification process to confirm the identity of the person submitting the erasure request. This will ensure that malicious individuals cannot cause a data breach or data loss by posing as someone else.
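One possible way to verify a request, sketched below, is to send a confirmation token to the contact address already on file and only process the erasure once the requester returns it. The token scheme, the names `issue_token` and `verify_token`, and the in-memory secret are assumptions for illustration; a real deployment would also need token expiry and secure storage of the secret.

```python
import hashlib
import hmac
import secrets

# Hypothetical server-side secret; in practice this would be loaded from secure storage.
SERVER_SECRET = secrets.token_bytes(32)

def issue_token(user_id, request_id):
    """Create a confirmation token bound to one user and one erasure request."""
    message = f"{user_id}:{request_id}".encode("utf-8")
    return hmac.new(SERVER_SECRET, message, hashlib.sha256).hexdigest()

def verify_token(user_id, request_id, token):
    """Check a returned token using a constant-time comparison."""
    expected = issue_token(user_id, request_id)
    return hmac.compare_digest(expected, token)

# The token would be sent to the address on file for "user-42".
token = issue_token("user-42", "req-001")

print(verify_token("user-42", "req-001", token))     # genuine requester
print(verify_token("user-99", "req-001", token))     # token reused for another account
print(verify_token("user-42", "req-001", "0" * 64))  # forged token
```

Binding the token to both the user and the specific request means a token intercepted for one account cannot be used to erase another account’s data.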

Frequently Asked Questions

1. What is facial recognition used for?

Facial recognition can be used for a variety of purposes by both public and private entities.

Its most prominent applications are as follows:

1. Unlocking Smartphones through Face ID.

2. Law enforcement bodies using FRT to catch crime suspects.

3. In airports for passenger screening.

4. In corporate offices, to ensure access to authorized personnel.

5. In retail shops, to monitor consumer behavior.

2. What are the ethical issues concerning facial recognition technology?

The rapid rise of facial recognition without clear legal frameworks in place has made several people anxious and legitimately concerned about its potential misuse and abuse. Critics have rightfully raised concerns regarding FRT’s use for mass surveillance, racial profiling, privacy breaches, identity theft, etc.

3. What is a major concern that critics have about emotion-sensing facial recognition ethics?

Concerns regarding emotion sensing in facial recognition stem from the fact that the science behind it is not sound. Emotion-sensing facial recognition can easily misinterpret a person’s emotions, which can lead to manipulation and discrimination by educational institutions, policing agencies, and hiring companies.

4. Which cities or states in the USA have banned the use of facial recognition?

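The following cities have either banned or placed a moratorium on the use of facial recognition by police or government bodies:

• Portland in Oregon

• Boston in Massachusetts

• San Francisco in California

States such as Illinois and Virginia have not banned the technology, but they restrict it through biometric privacy laws or limits on law enforcement use.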

5. Do you have the right to refuse facial recognition?

In places with biometric privacy laws, such as Illinois, private entities must ask for your consent to capture your facial data, and you can refuse to provide it. However, the same rule does not generally apply to law enforcement agencies.

It is also challenging, if not impossible, to refuse facial recognition systems set up by law enforcement agencies in public spaces.

Conclusion

Facial recognition systems are everywhere. Many of us have used this tech, or allowed it to capture and process our facial data, without even realizing it. While avoiding this tech is going to become harder in the future, my hope is for it to be better regulated than it is today.

Yes, it can make our lives easier. On the flipside, FRT can encroach on a citizen’s liberties and privacy. Stories of governments using FRT to target protesters or malicious individuals using deepfakes to commit identity theft are all real.

These ethical concerns can only be addressed with clear, tighter regulations rather than the fragmented landscape US citizens are dealing with today. Both private and public agencies need to step up and establish a system of accountability that ensures compliance with ethics and appropriate action when the tech is misused.

Research Process:

The total time involved to complete and publish this article is approximately 38 hours. This content was created through a structured research approach to ensure accuracy and reliability.
