This comprehensive tutorial explains what augmented reality (AR) is and how it works. It also covers the technology, examples, history, and applications of AR.

This tutorial starts by explaining the basics of Augmented Reality (AR) including what it is and how it works. We will then look at the main applications of AR, like remote collaboration, health, gaming, education, and manufacturing, with rich examples. We will also cover the hardware, apps, software, and devices employed in augmented reality.

This tutorial will also touch on the outlook of the augmented reality market and the issues and challenges surrounding AR.


What Is Augmented Reality?

AR allows virtual objects to be overlaid on real-world environments in real time. The image below shows a man using the IKEA AR app to design, improve, and live in his dream home.


Augmented Reality Definition

Augmented reality is defined as the technology and methods that overlay 3D virtual objects on real-world objects and environments using an AR device, and that allow the virtual objects to interact with real-world objects to create intended meanings.

Unlike virtual reality, which tries to recreate and replace an entire real-life environment with a virtual one, augmented reality is about enriching an image of the real world with computer-generated images and digital information. It seeks to change perception by adding video, infographics, images, sound, and other details.

Inside a device that creates AR content, virtual 3D images are overlaid on real-world objects based on their geometrical relationship. The device must be able to calculate the position and orientation of objects relative to one another. The combined image is then displayed on mobile screens, AR glasses, and similar devices.

On the viewing side, there are devices worn by the user to view AR content. Unlike virtual reality headsets, which completely immerse users in simulated worlds, AR glasses keep the real world visible. The glasses overlay virtual objects onto real-world objects, for instance, by placing AR markers on machines to mark repair areas.

A user wearing AR glasses can still see the real object or environment around them, but it is enriched with virtual images.

Although the first applications were in the military and television after the term was coined in 1990, AR is now applied in gaming, education and training, and other fields. Most of it is delivered as AR apps that can be installed on phones and computers. Today, it is enhanced by mobile phone technology such as GPS, 3G and 4G, and remote sensing.

Types Of AR

Augmented reality is of four types: marker-based, marker-less, projection-based, and superimposition-based AR. Let us see them one by one in detail.

#1) Marker-based AR

A marker, which is a distinctive visual object such as a special sign, and a camera are used to trigger the 3D digital animations. The system calculates the orientation and position of the marker to position the content correctly.

Marker-based AR example: a marker-based mobile AR furnishing app.


#2) Marker-less AR

Marker-less AR is used in events, business, and navigation apps. For instance, the technology uses location-based information to determine what content the user gets or finds in a certain area. It may use GPS, compasses, gyroscopes, and accelerometers, all of which are available on mobile phones.
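As a minimal sketch of how location-based information can decide which content a user gets in a certain area, the snippet below picks the points of interest that lie within a given radius of the user's GPS position. The function names, the 200 m default radius, and the sample coordinates are assumptions made for illustration; they do not come from any particular AR SDK.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres

def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

def nearby_content(user_lat, user_lon, points_of_interest, radius_m=200):
    """Return the names of points of interest within radius_m, nearest first."""
    hits = []
    for name, lat, lon in points_of_interest:
        distance = haversine_m(user_lat, user_lon, lat, lon)
        if distance <= radius_m:
            hits.append((distance, name))
    return [name for _, name in sorted(hits)]

# Hypothetical points of interest: (name, latitude, longitude)
POIS = [
    ("Museum entrance", 48.8606, 2.3376),
    ("Cafe", 48.8610, 2.3380),
    ("Far-away tower", 48.8584, 2.2945),
]
```

Calling `nearby_content(48.8607, 2.3377, POIS)` returns the museum entrance and the cafe, in that order, and leaves out the tower, which is several kilometres away. A real app would combine this with compass and gyroscope readings to decide where on the screen each item appears.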


The example below shows that marker-less AR does not need any physical markers to place objects in a real-world space:


#3) Projection-based AR

This kind uses synthetic light projected onto physical surfaces and detects the user's interaction with those surfaces. It resembles the holograms seen in Star Wars and other sci-fi movies.

The image below is an example showing a sword projected by a projection-based AR headset:


#4) Superimposition-based AR

In this case, the original item is replaced with an augmentation, fully or partially. The IKEA Catalog app is an example of superimposition-based AR: it lets users place a virtual furniture item, drawn to scale, over an image of a room.
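Full or partial replacement of the original item can be illustrated with simple alpha blending. The sketch below is an assumption about how such a blend might look on raw RGB pixels, not the method used by any specific app; `superimpose`, `superimpose_region`, and the mask layout are hypothetical names chosen for this example.

```python
def superimpose(camera_px, virtual_px, alpha):
    """Blend one virtual RGB pixel over one camera RGB pixel.

    alpha = 1.0 replaces the real pixel fully, 0.0 leaves it untouched,
    and values in between give a partial (see-through) replacement.
    """
    return tuple(
        round(alpha * v + (1 - alpha) * c) for c, v in zip(camera_px, virtual_px)
    )

def superimpose_region(frame, overlay, mask, alpha=1.0):
    """Return a new frame where only the masked pixels are blended with the overlay.

    frame and overlay are lists of rows of RGB tuples; mask is a list of rows
    of booleans marking where the original item sits in the image.
    """
    return [
        [superimpose(c, v, alpha) if m else c for c, v, m in zip(f_row, o_row, m_row)]
        for f_row, o_row, m_row in zip(frame, overlay, mask)
    ]
```

For example, `superimpose((100, 100, 100), (200, 0, 50), 0.5)` gives `(150, 50, 75)`, a half-transparent augmentation, while an alpha of `1.0` returns the virtual pixel unchanged.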

Brief History Of AR

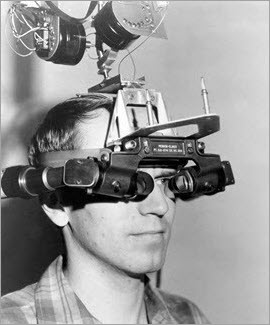

1968: Ivan Sutherland and Bob Sproull created the world’s first head-mounted display with primitive computer graphics.


1975: Videoplace, an AR lab, was created by Myron Krueger. Its mission was to let human movement interact with digital content. This technology was later employed in projectors, cameras, and on-screen silhouettes.


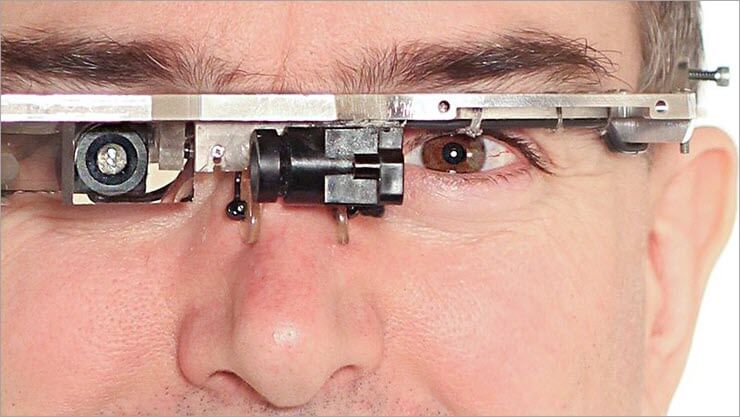

1980: EyeTap, the first portable computer worn in front of the eye, was developed by Steve Mann. EyeTap recorded images and superimposed others on them. It could be controlled by head movements.


1987: A prototype of a Heads-Up Display (HUD) was developed by Douglas George and Robert Morris. It displayed astronomical data over the real sky.

1990: The term augmented reality was coined by Thomas Caudell and David Mizell, researchers for the Boeing company.


1992: Virtual Fixtures, an AR system, was developed by Louis Rosenberg at the U.S. Air Force.


1999: Frank Delgado, Mike Abernathy, and their team of scientists developed new navigation software that could generate runway and street data from a helicopter video.

2000: ARToolKit, an open-source SDK, was developed by the Japanese scientist Hirokazu Kato. It was later adapted to work with Adobe Flash.

2004: An outdoor helmet-mounted AR system was presented by Trimble Navigation.

2008: An AR travel guide for Android mobile devices was made by Wikitude.

2013 to date: Google Glass with a Bluetooth Internet connection; Microsoft HoloLens, AR goggles with sensors to display HD holograms; and Niantic’s Pokemon Go game for mobile devices.


How Does AR Work: Technology Behind It

AR works in three steps. The first is the generation of images of real-world environments. The second is the use of technology that overlays 3D images on the images of real-world objects. The third is the use of technology that lets users interact and engage with the simulated environments.

AR can be displayed on screens, glasses, handheld devices, mobile phones, and head-mounted displays.


As such, we have mobile-based AR, head-mounted gear AR, smart glasses AR, and web-based AR. Headsets are more immersive than mobile-based and other types. Smart glasses are wearable AR devices that provide first-person views, while web-based AR does not require downloading any app.

Configurations of AR glasses:


AR uses SLAM (Simultaneous Localization And Mapping) technology and depth tracking technology, which calculates the distance to an object using sensor data, in addition to other technologies.

Augmented Reality Technology

AR technology allows real-time augmentation and this augmentation takes place within the context of the environment. Animations, images, videos, and 3D models may be used and users can see objects in natural and synthetic light.

Visual-based SLAM:


Simultaneous Localization and Mapping (SLAM) technology is a set of algorithms that solve two problems at once: building a map of an unknown environment and locating the device within that map.

SLAM uses feature points to help apps understand the physical world. The technology allows apps to understand 3D objects and scenes, track the physical world in real time, and overlay digital simulations on it.

SLAM uses a mobile platform, such as a robot or a mobile device, to detect the surrounding environment, create a virtual map, and trace its own position, direction, and path on that map. Aside from AR, it is employed in drones, aerial vehicles, unmanned vehicles, and robot cleaners, where it may use artificial intelligence and machine learning to understand locations.

Feature detection and matching are done using cameras and sensors that collect feature points from various viewpoints. The triangulation technique then infers the three-dimensional location of the object.
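To make the triangulation step more concrete, here is a minimal sketch that estimates the 3D location of one feature point seen from two viewpoints. It assumes each viewpoint is given as a camera centre plus a viewing direction toward the feature, and it uses the midpoint method (the midpoint of the shortest segment between the two rays), which is only one of several triangulation techniques. The function names are hypothetical.

```python
def dot(u, v):
    """Dot product of two 3D vectors given as tuples."""
    return sum(a * b for a, b in zip(u, v))

def triangulate_midpoint(c1, d1, c2, d2):
    """Estimate a 3D point from two viewing rays (camera centre + direction).

    Returns the midpoint of the shortest segment joining the two rays,
    or None when the rays are (nearly) parallel and depth cannot be recovered.
    """
    w = tuple(p - q for p, q in zip(c1, c2))
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w), dot(d2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        return None
    s = (b * e - c * d) / denom  # distance parameter along the first ray
    t = (a * e - b * d) / denom  # distance parameter along the second ray
    p1 = tuple(p + s * q for p, q in zip(c1, d1))
    p2 = tuple(p + t * q for p, q in zip(c2, d2))
    return tuple((u + v) / 2 for u, v in zip(p1, p2))
```

With two cameras at `(-1, 0, 0)` and `(1, 0, 0)` looking along `(1, 0, 5)` and `(-1, 0, 5)`, the function returns the point `(0.0, 0.0, 5.0)`, five units in front of the pair. With noisy real measurements the two rays rarely intersect exactly, which is why the midpoint is taken.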

In AR, SLAM helps slot and blend the virtual object into a real object.

Recognition-based AR: It uses a camera to identify markers so that an overlay is possible whenever a marker is detected. The device detects the marker, calculates its position and orientation, and replaces the real-world marker with its 3D version. It then calculates the position and orientation of other objects. Rotating the marker rotates the entire object.
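The effect of "rotating the marker rotates the entire object" can be sketched by applying the marker's pose to every vertex of the 3D model. The example below is a simplification that assumes the marker only rotates about its own normal (the z axis) and is then shifted to its detected position; a real tracker estimates a full 3D rotation. `rotate_z` and `place_on_marker` are hypothetical helper names.

```python
import math

def rotate_z(point, angle_deg):
    """Rotate a 3D point about the z axis (the marker's normal) by angle_deg."""
    x, y, z = point
    a = math.radians(angle_deg)
    return (x * math.cos(a) - y * math.sin(a), x * math.sin(a) + y * math.cos(a), z)

def place_on_marker(model_vertices, marker_position, marker_angle_deg):
    """Transform model vertices from marker coordinates to camera coordinates.

    Each vertex is rotated by the marker's orientation and then translated
    to the marker's position, so the model follows the marker as it moves.
    """
    mx, my, mz = marker_position
    placed = []
    for vertex in model_vertices:
        rx, ry, rz = rotate_z(vertex, marker_angle_deg)
        placed.append((rx + mx, ry + my, rz + mz))
    return placed
```

For instance, a vertex at `(1, 0, 0)` on a marker that sits five units in front of the camera and is turned by 90 degrees ends up at approximately `(0, 1, 5)`.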

Location-based approach: Here the simulations or visualizations are generated from data collected by GPS, digital compasses, accelerometers, and velocity meters. It is very common on smartphones.
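As a rough illustration of how GPS and compass data can be combined, the sketch below computes the compass bearing from the user to a target and maps it to a horizontal screen position for a label. The linear angle-to-pixel mapping, the 60 degree field of view, and the 1080 pixel screen width are simplifying assumptions, and the function names are hypothetical.

```python
import math

def bearing_deg(lat1, lon1, lat2, lon2):
    """Initial compass bearing (0 = north, 90 = east) from point 1 to point 2."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlmb = math.radians(lon2 - lon1)
    y = math.sin(dlmb) * math.cos(phi2)
    x = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlmb)
    return math.degrees(math.atan2(y, x)) % 360

def screen_x(heading_deg, target_bearing_deg, screen_width_px=1080, fov_deg=60):
    """Horizontal pixel for a label, or None if the target is outside the view.

    heading_deg is the direction the camera faces, as read from the compass.
    """
    # Signed angle from the camera's centre line to the target, in [-180, 180)
    offset = (target_bearing_deg - heading_deg + 180) % 360 - 180
    if abs(offset) > fov_deg / 2:
        return None
    return round((offset / fov_deg + 0.5) * screen_width_px)
```

A target straight ahead, as in `screen_x(90, 90)`, lands at the centre pixel `540`, while a target behind the user, as in `screen_x(90, 270)`, returns `None` and is not drawn.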

Depth tracking technology: Depth map tracking cameras such as Microsoft Kinect generate a real-time depth map by using different technologies to calculate the real-time distance of objects in the tracking area from the camera. The technologies isolate an object from the general depth map and analyze it.
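Isolating an object from the general depth map can be sketched with a simple distance threshold. The example below assumes the depth map is a grid of distances in millimetres where `0` means "no reading", and it keeps every pixel within a fixed band behind the closest valid reading. This is an illustrative simplification, not the algorithm used by Kinect or any other depth camera; the function name and the 150 mm band are assumptions.

```python
def isolate_nearest_object(depth_map, band_mm=150):
    """Return a boolean mask of pixels within band_mm of the closest valid depth.

    depth_map is a list of rows of distances in millimetres; 0 means "no reading".
    """
    valid = [d for row in depth_map for d in row if d > 0]
    if not valid:
        # No usable readings: nothing can be isolated
        return [[False] * len(row) for row in depth_map]
    nearest = min(valid)
    return [[0 < d <= nearest + band_mm for d in row] for row in depth_map]
```

Given a map where a hand at about 600 mm sits in front of a wall at 2000 mm, the mask is `True` only for the hand pixels, which can then be analyzed separately from the background.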

The example below shows hand tracking using depth algorithms:


Natural feature tracking technology: It may be used to track rigid objects in a maintenance or assembly job. A multistage tracking algorithm is used to estimate the motion of an object more accurately. Marker tracking is used, as an alternative, alongside calibration techniques.

The overlaying of virtual 3D objects and animations on real-world objects is based on their geometrical relationship. Extended face-tracking cameras are now available on smartphones such as the iPhone XR, which has a TrueDepth camera to allow better AR experiences.
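One way to picture this geometrical relationship is the pinhole camera model, which maps a 3D point in front of the camera to the pixel where the virtual object should be drawn. The sketch below assumes camera coordinates with x to the right, y down, and z forward; the focal length and image centre values in the usage note are made-up numbers, and `project_to_pixel` is a hypothetical name.

```python
def project_to_pixel(point_cam, focal_px, cx, cy):
    """Project a 3D point in camera coordinates to pixel coordinates.

    focal_px is the focal length in pixels and (cx, cy) is the image centre.
    Returns None for points at or behind the camera plane.
    """
    x, y, z = point_cam
    if z <= 0:
        return None  # behind the camera: nothing to draw
    return (focal_px * x / z + cx, focal_px * y / z + cy)
```

With `focal_px=800` and an image centre of `(640, 360)`, the point `(0.5, -0.25, 2)` projects to `(840.0, 260.0)`. Moving the same point twice as far away, to `z = 4`, gives `(740.0, 310.0)`, so the overlay shifts toward the centre of the image as the distance grows.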

Devices And Components Of AR

Kinect AR Camera:


Cameras and sensors: These include AR cameras or other cameras, for instance, on smartphones, which take 3D images of real-world objects and send them for processing. Sensors collect data about the user’s interaction with the app and virtual objects and send it for processing.

Processing devices: AR smartphones, computers, and special devices use graphics hardware, GPUs, CPUs, flash memory, RAM, Bluetooth, WiFi, GPS, etc. to process the 3D images and sensor signals. They may measure speed, angle, orientation, direction, etc.

Projector: AR projection involves projecting generated simulations on AR headset lenses or other surfaces for viewing. This employs a miniature projector.


Reflectors: Reflectors such as mirrors are used on AR devices to help human eyes to view virtual images. An array of small curved mirrors or double-sided mirrors can be used to reflect light to the AR camera and the user’s eye, mostly to properly align the image.

Mobile devices: Modern smartphones are well suited to AR because they contain integrated GPS, sensors, cameras, accelerometers, gyroscopes, digital compasses, displays, and GPU/CPUs. Further, AR apps can be installed on mobile devices for mobile AR experiences.

The image below shows AR on an iPhone X:


Head-Up Display or HUD: A special device that projects AR data onto a transparent display for viewing. It was first employed in military training, but it is now used in aviation, automobiles, manufacturing, sports, etc.

AR glasses, also called smart glasses: Smart glasses can display notifications, for instance, from smartphones. They include Google Glass, Laforge AR eyewear, and Laster See-Thru, among others.

AR contact lenses (or smart lenses): These are worn to be in contact with the eye. Manufacturers such as Sony are working on lenses with additional features such as the capability to take photos or store data.

AR contact lenses are worn in contact with the eye:


Virtual retinal displays: They create images by projecting laser light into the human eye.


Benefits Of AR

Let us see some benefits of AR for your business or organization and how to integrate it:

- Integration or adoption depends on your use case and application. You may want to employ it for monitoring maintenance and production work, performing virtual walkthroughs of real estate property, advertising products, boosting remote design, etc.

- Today, virtual fitting rooms can help decrease purchase returns and improve purchase decisions made by buyers.

- Salespeople can produce and publish interesting branded AR content and insert ads in them so people can get to know their products when they watch the content. AR improves engagement.

- In manufacturing, AR markers on images of manufacturing equipment help project managers to monitor work remotely. This reduces the need to use digital maps and plans. For instance, a device can be pointed at a location to determine whether a machine will fit in position.

- Immersive real-life simulations are delivering pedagogical benefits to learners. Simulations in game-based learning and training come with psychological benefits and increase empathy among learners, as shown by researchers.

- Medical students can use AR and VR simulations to practice their first surgeries, and as many more as they need, without hefty budgets or unnecessary injuries to patients, all with immersive, near-real experiences.

The image below depicts how AR is applied in medical training for surgery practice:


- Using AR, future astronauts can try their first or next space mission.

- AR enables virtual tourism. AR apps, for instance, can provide directions to desirable destinations, translate the signs on the street, and provide information on sightseeing. A good example is a GPS navigation app. AR content enables the production of new cultural experiences, for instance, where additional reality is added to museums.

- The combined AR and VR market was forecast to reach $150 billion by 2020, with AR expected to grow more than VR: about $120 billion compared to $30 billion. AR-enabled devices are expected to reach 2.5 billion by 2023.

- Developing their own branded applications is one of the most common ways companies engage with AR technology. Companies can also place ads on third-party AR platforms and content, buy licenses for developed software, or rent spaces for their AR content and audiences.

- Developers can use AR development platforms such as ARKit and ARCore to develop applications and integrate AR into business applications.

Augmented Reality vs. Virtual Reality vs. Mixed Reality

Augmented reality is similar to virtual reality and mixed reality in that all three attempt to generate 3D virtual simulations of real-world objects. Mixed reality mixes real and simulated objects.

All the cases above use sensors and markers to track the position of virtual and real-world objects. AR uses sensors and markers to detect the position of real-world objects and then to determine the location of simulated ones. The AR system then renders an image to present to the user. In VR, which also employs math algorithms, the simulated world reacts to the user’s head and eye movements.

However, while VR isolates the user from the real world to completely immerse them into simulated worlds, AR is partially immersive.


Mixed reality combines both AR and VR. It involves the interaction of both the real world and virtual objects.

Augmented Reality Applications

| Application | Description |
|---|---|
| Gaming | AR allows for better gaming experiences, as games move from purely virtual spheres into real-life settings where players perform real-life activities to play. |
| Retail and Advertising | AR can improve customer experiences by presenting customers with 3D models of products and helping them make better choices through virtual walkthroughs, such as in real estate. It can be used to lead customers to virtual stores and rooms. Customers can overlay 3D items on their own spaces, for example when buying furniture, to select the items that best match those spaces in size, shape, color, and type. In advertising, ads can be included in AR content to help companies popularize their content to viewers. |
| Manufacturing and Maintenance | In maintenance, repair technicians on the ground can be directed remotely by professionals through AR apps, without the professionals having to travel to the location. This is useful in places that are hard to reach. |
| Education | AR interactive models are used for training and learning. |
| Military | AR assists in advanced navigation and helps mark objects in real time. |
| Tourism | AR, in addition to placing ads on AR content, can be used for navigation and for providing data on destinations, directions, and sightseeing. |
| Medicine/Healthcare | AR can help train healthcare workers remotely, monitor health situations, and diagnose patients. |

AR Example In Real Life

- Elements 4D is a chemistry learning application that employs AR to make chemistry more fun and engaging. With it, students make paper cubes from the element blocks and place them in front of their AR cameras on their devices. They can then see representations of their chemical elements, names, and atomic weights. Students can bring together the cubes to see if they react and to see chemical reactions.


- Google Expeditions, which uses Google Cardboard viewers, already allows students from across the world to take virtual tours for history, religion, and geography studies.

- Human Anatomy Atlas lets students explore over 10,000 3D human body models in seven languages, helping them learn the parts and how they work, and improve their knowledge.

- Touch Surgery simulates surgery practice. In partnership with DAQRI, an AR company, medical institutions can see their students practicing surgery on virtual patients.

- The IKEA mobile app is well known for real estate and home product walkthroughs and testing. Other apps include Niantic’s Pokemon Go app for gaming.


Developing And Designing For AR

AR development platforms are platforms on which you can develop or code AR apps. Examples include ZapWorks, ARToolKit, MAXST for Windows AR and smartphone AR, DAQRI, SmartReality, ARCore by Google, Windows Mixed Reality AR platform, Vuforia, and ARKit by Apple. Some allow the development of apps for mobile, others for PC, and on different operating systems.

AR development platforms allow developers to give apps different features, such as support for other platforms like Unity, 3D tracking, text recognition, creation of 3D maps, cloud storage, support for single and 3D cameras, and support for smart glasses.

Different platforms allow the development of marker-based and/or location-based apps. Features to consider when selecting a platform include cost, platform support, image recognition support, 3D recognition and tracking (the most important feature), support for third-party platforms such as Unity, from which users can import and export AR projects and integrate with other platforms, cloud or local storage support, GPS support, SLAM support, etc.

The AR apps developed with these platforms support a myriad of features and capabilities. They may allow content to be viewed with one or a range of AR glasses, and may include pre-made AR objects, support for reflection mapping where objects have reflections, real-time image tracking, and 2D and 3D recognition.

Some SDKs (software development kits) allow the development of apps by drag and drop, while others require coding knowledge.

Some AR apps allow users to develop from scratch, upload, and edit their own AR content.

Conclusion

In this augmented reality tutorial, we learned that the technology allows the overlaying of virtual objects on real-world environments or objects. It uses a combination of technologies including SLAM, depth tracking, natural feature tracking, and object recognition, among others.

This augmented reality tutorial dwelt on introducing AR, the basics of its operation, the technology of AR, and its application. We finally considered the best practice for those interested in integrating and developing AR.