A comprehensive study of the Agile Manifesto and its role in software development. Explore the values and principles enshrined in the Agile Manifesto in detail.

Our previous tutorial on Agile Methodology explained Agile models and methodologies in detail.

However, we have not yet discussed why Agile was needed in the first place, or how it overcame the shortcomings of existing software development methodologies such as the Waterfall model.

In this tutorial, we will go deeper into Agile and the Agile Manifesto. We will see what the manifesto says and which values and principles it enshrines.


Agile Manifesto for Software Development

As we saw in our previous tutorial, earlier development methodologies took too much time. By the time the software was ready for deployment, the business requirements had often changed, so the software no longer met current needs.

This lack of speed caused a lot of problems. When the leaders of different development methodologies met to decide on the way forward, they agreed on a better approach and finalized the wording of the manifesto.

This was captured as 4 values and 12 principles to help practitioners understand it, refer to it, and put it into practice. At the time, none of them could have imagined the impact this would have on the future of project management.

What Is the Agile Manifesto

The manifesto has been carefully worded to capture the essence of Agile in as few words as possible. It reads as follows:

“We are uncovering better ways of developing software by doing it and helping others do it. Through this work we have come to value:

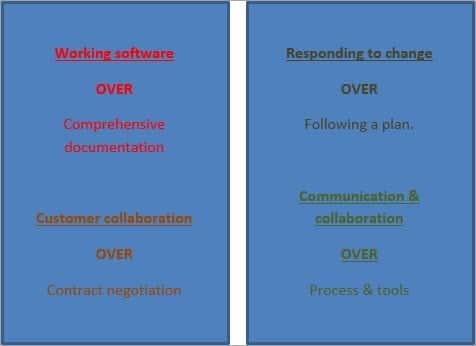

- Individuals and interactions over processes and tools.

- Working software over comprehensive documentation.

- Customer collaboration over contract negotiation.

- Responding to change over following a plan.

That is, while there is value in the items on the right, we value the items on the left more.”

As we can see, these are concise and simple statements that make it very clear what the founders wanted to promote. Traditional project plans are usually rigid and emphasize procedures and timelines, but the Agile Manifesto promotes exactly the opposite.

It prefers:

- People

- Product

- Communication and

- Responsiveness

We will explore this new paradigm in detail by getting a deeper understanding of the Agile values and principles.

4 Agile Values

The four values, along with the 12 principles, guide Agile software delivery. We will now discuss each value in detail.

#1) Individuals and Interactions over Processes and Tools

Individuals and interactions are preferred over processes and tools because they make the process more responsive. Once individuals are aligned and understand each other, the team can resolve any issues with the tools or processes.

But if teams insist on blindly sticking to processes, this can cause misunderstandings among individuals and create unexpected roadblocks, resulting in project delays.

That is why it is always preferable to rely on interaction and communication among team members rather than blindly depending on processes to guide the way forward. One way to achieve this is to have an involved product owner who works with the development team and can make decisions collaboratively.

Allowing individuals to contribute on their own terms also lets them showcase what they bring to the table. When these team interactions are directed toward solving a common problem, the results can be powerful.

#2) Working Software over Comprehensive Documentation

Traditional project management involved comprehensive documentation that took months to produce. This hurt project delivery, and the resulting delays were inevitable.

The documentation created for these projects was very detailed, and so many documents were produced that many of them were never even referred to as the project progressed. This was an unnecessary evil that project teams had to live with.

It also made delivery problems worse. Teams focused so heavily on documentation because they wanted to end up with a finished product that matched the specifications 100%, which is why they tried to capture every specification in detail.

Even so, the end product often turned out quite different from what was expected, or had lost its relevance. That is why Agile holds that working software is a much better way to gauge customer expectations than heaps of documentation.

This does not mean that documentation is unnecessary. It means that a working product is a far better indicator of alignment with customer needs and expectations than a document created months ago. It also means that the team stays responsive, ready to adapt to change as required, and shows the working software to the client at the end of each sprint.

If the product is not tested during the sprint, fixing the resulting problems in the next sprint costs many times more effort. Once the functionality is deployed, the cost of these changes rises significantly further.

#3) Customer Collaboration Over Contract Negotiation

Negotiation implies that the details are still being settled and have not been finalized, so there is still scope for renegotiation. Once the negotiation is over, however, the terms are fixed and no longer open to discussion. Agile says that instead of negotiation, we should go for collaboration.

Collaboration implies that there is always room for discussion and that communication is ongoing, not a one-time event. This gives a two-fold advantage: it helps the team correct course at an earlier stage if required, and it helps the client refine their vision and redefine their requirements during the project if needed.

Another aspect is that traditional software development models involve the customer before development begins, during the documentation and negotiation phase, but not nearly as much during development itself.

Once the requirements are frozen, the customer gets to see the product only when it is ready. Agile breaks through this barrier as well by involving the customer over the whole lifecycle.

This helps Agile teams align better with customer needs. One way to achieve this is through a dedicated and involved product owner who can help the team in real time with clarifications and align the work with customer priorities.

#4) Responding to Change Over Following a Plan

The traditional thinking is that changes are expensive and should be avoided at all costs. That is why so much effort went into documentation and elaborate plans, in order to deliver while sticking to the timelines and product specifications.

But as experience teaches us, change is mostly inevitable, and instead of running from it we should embrace it and plan for it.

Agile enables this transition. In the Agile view, change is not an expense but welcome feedback that helps improve the project. It should not be avoided, because it adds value.

With the short sprints that Agile proposes, teams can get quick feedback and shift priorities at short notice. New features can be added from one iteration to the next.

Why do we do this? Because most of the features developed using the Waterfall approach are never used. The Waterfall model follows a plan made at the start of the project, which is exactly the phase when we know the least.

Agile also plans, but it takes a just-in-time approach: just enough planning is done, when it is needed. The plans always remain open to change as the sprints progress.

The 12 Agile Principles

The 12 Agile principles were added after the manifesto was created, to guide teams' transition to Agile and to help them check whether their practices are in line with the Agile culture.

Following is the text of the original 12 principles, published in 2001 by the Agile Alliance:

#1) Our highest priority is to satisfy the customer through early and continuous delivery of valuable software.

#2) Welcome changing requirements, even late in development. Agile processes harness change for the customer’s competitive advantage.

#3) Deliver working software frequently, from a couple of weeks to a couple of months, with a preference to the shorter timescale.

#4) Business people and developers must work together daily throughout the project.

#5) Build projects around motivated individuals. Give them the environment and support they need, and trust them to get the job done.

#6) The most efficient and effective method of conveying information to and within a development team is face-to-face conversation.

#7) Working software is the primary measure of progress.

#8) Agile processes promote sustainable development. The sponsors, developers, and users should be able to maintain a constant pace indefinitely.

#9) Continuous attention to technical excellence and good design enhances agility.

#10) Simplicity, the art of maximizing the amount of work not done, is essential.

#11) The best architectures, requirements, and designs emerge from self-organizing teams.

#12) At regular intervals, the team reflects on how to become more effective, then tunes and adjusts its behavior accordingly.

These agile principles provide practical guidance for the development teams.

Another way of organizing the 12 principles is to consider them in the following four distinct groups:

- Customer satisfaction

- Quality

- Teamwork

- Project management

#1) Our highest priority is to satisfy the customer through early and continuous delivery of valuable software: Customers are far happier seeing working software delivered every sprint than enduring an ambiguous waiting period, at the end of which they finally get to see the product.

Here the customer is the project sponsor, or the person paying for the development. The end user of the product is also a customer, but we distinguish the two by referring to the end user simply as the user.

#2) Welcome changing requirements, even late in development. Agile processes harness change for the customer’s competitive advantage: Changes can be incorporated without much delay to the overall timelines.

Since Agile teams put quality above all else, they would rather incorporate changes and deliver what the customer requires than avoid changes and deliver a product that does not serve the business needs.

#3) Deliver working software frequently, from a couple of weeks to a couple of months, with a preference for the shorter timescale: Teams achieve this by working in sprints. Because sprints are time-boxed iterations that deliver working software at the end of each one, customers get a regular view of progress.

#4) Business people and developers must work together daily throughout the project: Better decisions are made when both work together collaboratively, with a constant feedback loop between them for course correction and agility in the face of change. Communication among stakeholders is always key to Agile.

#5) Build projects around motivated individuals. Give them the environment and support they need, and trust them to get the job done: You have to support, trust, and motivate the teams. A motivated team is more likely to succeed and deliver a superior product than an unhappy team that is unwilling to give its best.

One of the ways to do this is to empower the development team to be self-organized and make their own decisions.

#6) The most efficient and effective method of conveying information to and within a development team is face-to-face conversation: Communication is better and more impactful when teams are in the same location and can meet face-to-face for discussions. It helps build trust and understanding among the various stakeholders.

#7) Working software is the primary measure of progress: Working software beats all other KPIs and is the best indicator of the work done.

#8) Agile processes promote sustainable development. The sponsors, developers, and users should be able to maintain a constant pace indefinitely: Consistency of delivery is emphasized. The team should be able to maintain their pace throughout the project and not burn out after the first few sprints.

#9) Continuous attention to technical excellence and good design enhances agility: The team needs the right skills and a good product design to produce a high-quality product while still being able to incorporate changes.

#10) Simplicity, the art of maximizing the amount of work not done, is essential: The team does just enough work to meet the Definition of Done.

#11) The best architectures, requirements, and designs emerge from self-organizing teams: Self-organized teams are empowered and take ownership of their work. This leads to open communication and regular sharing of ideas among the team members.

#12) At regular intervals, the team reflects on how to become more effective, then tunes and adjusts its behavior accordingly: Continuous self-improvement leads to quicker results and less rework.

Mindset Change of an Agile Tester

“Testing in Agile” is not a different technique, rather it’s a change in mindset, and change does not happen overnight. It requires knowledge, skill, and proper mentoring.

Aligning the Agile tester with the Agile Manifesto goes a long way toward understanding the tester's mindset. The following section explains this change in mindset.

Aligning Agile Tester with Agile Manifesto

The term Agile means “Flexible”, or “able to move quickly”.

Agile testing is NOT a new testing technique. Rather, being Agile means changing your mindset toward delivering a testable piece. Let’s look deeper into Agile testing to understand the origin and philosophy behind Agile.

Before the world moved to Agile, Waterfall was the dominant methodology in the software industry. I am not going to explain the Waterfall model here. Instead, I list some of the practices teams followed when implementing a feature.

These pointers are based on my own experience, and opinions may differ.

So here we go…

- Developers and QAs worked as separate teams (Sometimes as Rivals 🙂 )

- Developers and QAs referred to the same requirements document simultaneously. Developers did their design and coding, and QAs wrote their test cases from that same document. Planning and execution were done in silos.

- Test case reviews were done solely by the QA leads. Sharing test cases with the developers was not considered good practice. (The reasoning: developers would code to the test cases, and the QAs would find fewer defects.)

- Testing was considered the LAST activity of an implementation cycle. Most of the time, QAs received the feature at the last stage and were expected to complete all the testing in very limited time. (And the QAs did it.)

- The only goal of the QAs was to identify bugs and defects. Their overall performance was judged by the number of valid bugs/defects they submitted.

- The STLC (Software Testing Life Cycle) and the defect lifecycle were followed during execution, and email was the preferred mode of communication.

- Automation was considered an end activity and mostly focused on the UI. The regression suite was considered the best candidate for automation.

Following these practices did have its drawbacks:

- Because the teams worked in silos, the only medium of communication between the developers and QA was “Bugs & defects”

- The only scope of QA was to write and execute test cases on the finished product

- There was very little to no scope for QAs to view the code or interact with the developers or business

- Because the entire product was released at once, the QAs carried a huge responsibility at release time. QAs were generally considered the quality gatekeepers, and if anything went wrong in production, the entire blame fell on them.

- Apart from functional testing, regression testing of the entire product, which comprised a huge number of test cases, was also the QAs' responsibility.

Out of all the drawbacks, the major disadvantage was that of “Loss of focus from the ultimate goal of delivering a good quality product at a sustainable pace”.

The ultimate goal of the team (developers + testers) is to deliver good-quality software that meets the customer requirements and is fit for use. Because of the long release cycles and the longer time to market, this focus blurred, and the only objective left was to finish the implementation and move the code to UAT.

QAs concentrated only on the test case execution (put a tick mark against the test case checklist), made sure the bugs/defects were closed or deferred, and moved on to a different project/module. So, from QA’s perspective, the focus was not on speed and quality of delivery but on completing the test case execution (and of course some automation).

Agile Philosophy

It all started in early 2001, when a group of 17 professionals met in Utah (USA) to ski, eat, relax, and have quality discussions. What came out of it was the Agile Manifesto.

As quality professionals, we too need to understand the essence of the manifesto and shape our thought process accordingly.

Let’s try to align our thought process of testing the software with the Agile manifesto, but before I do that, let’s understand one thing: in Agile, the teams are cross-skilled and everybody in the team contributes towards the development of the product/feature.

Therefore, I prefer to call the entire team the “Development team”, which includes programmers, testers, and business analysts. Henceforth, I will use the term “programmers” for developers and “testers” for QAs.

#1) Working Software OVER Comprehensive Documentation

The ultimate goal in Agile development is to deliver potentially shippable software increments in a short period, which means that time to market is key. That does not mean quality can be compromised. Because the time to market is shorter, the testing strategy and execution planning must focus even more on the overall quality of the system.

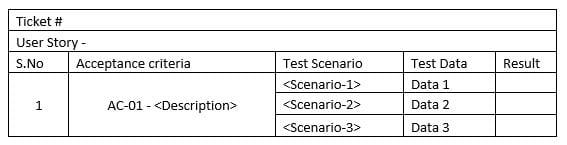

Testing is an endless job that can go on and on, so testers have to define certain parameters against which they can give the green signal to move the product to production. To do so, testers must take an equal part in deciding the Definition of Ready (DoR) and the Definition of Done (DoD), and must not forget to agree on the Acceptance Criteria of the story.

Test scenarios and test cases should revolve around the Definition of Done and the Acceptance Criteria of the story. Instead of writing exhaustive test cases filled with information that is seldom used, focus on precise, to-the-point scenarios. I have used the below template to write my test cases.

The point here is to include only the information in the test scenarios/cases that are required and add value to the cases.
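As an illustration only, the Python sketch below shows one way to keep scenarios lean and traceable. It is a hypothetical structure, not the author's template. Each scenario carries just a story ID, the acceptance criterion it verifies, and a given/when/then description. A small helper lists any acceptance criteria that no scenario covers yet. The `LeanScenario` class, the `uncovered_criteria` helper, and the sample story `STORY-101` are all assumed names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LeanScenario:
    """A lean test scenario holding only fields that add value (hypothetical layout)."""
    story_id: str              # story the scenario belongs to
    acceptance_criterion: str  # the acceptance criterion this scenario verifies
    given: str                 # precondition
    when: str                  # action performed
    then: str                  # expected result


def uncovered_criteria(criteria: list[str], scenarios: list[LeanScenario]) -> list[str]:
    """Return the acceptance criteria that no scenario traces back to, in their original order."""
    covered = {s.acceptance_criterion for s in scenarios}
    return [c for c in criteria if c not in covered]


# Hypothetical story with two acceptance criteria and one scenario written so far.
criteria = [
    "AC1: valid login opens the dashboard",
    "AC2: three failed logins lock the account",
]
scenarios = [
    LeanScenario(
        story_id="STORY-101",
        acceptance_criterion=criteria[0],
        given="a registered user",
        when="they log in with correct credentials",
        then="the dashboard is shown",
    ),
]

print(uncovered_criteria(criteria, scenarios))
# prints ['AC2: three failed logins lock the account']
```

Tying every scenario to an acceptance criterion makes gaps visible early, and it keeps the scenario text to what the Definition of Done actually requires.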

#2) Customer Collaboration OVER Contract Negotiation

Let’s communicate directly with the customer regarding our testing approach and try to be transparent in sharing the test cases, data, and results.

Hold frequent feedback sessions with the customer and share the test results. Ask the customer whether they are happy with the results or want specific scenarios covered. Let’s not restrict ourselves to asking questions and seeking clarification only from the product owner/business to understand the functionality and the business.

The deeper our understanding of the features, the more precise our test coverage.

#3) Responding to Change OVER Following a Plan

The only constant thing is change!!

We cannot control change and we have to understand and accept the fact that there will be changes in the features and requirements; we have to adapt and implement.

Agile readily accommodates frequent changes in requirements. In the same way, we as testers need to keep our test plan and scenarios flexible enough to accommodate new changes.

Traditionally, we create a test plan and the same is followed throughout the lifecycle of the project. Instead, in Agile, the plan has to be dynamic and moulded as per the requirements. Again, the focus should be on meeting the Definition of Done and the Acceptance criteria of the story.

I don’t see a need to create a test plan for every story. Instead, we can create one at the epic level: as epics are written and worked on, test plans for them can be created in parallel. There may or may not be a defined template for this. The aim is simply to make sure the quality aspects of the entire epic are covered.

Try to use the PI (Program Increment) planning days to determine the high-level test scenarios for each story based on the Definition of Done and the acceptance criteria.

#4) Communication & Collaboration OVER Process & Tools

Testers are very much process-oriented (which is perfectly fine), but we should make sure that following a process does not slow down the turnaround or response time for an issue.

If the team is co-located, issues can be resolved through direct communication, and daily stand-ups provide a good platform for resolving them. It is important to log a defect, but it should be done only for tracking purposes.

Testers should pair up with the programmers and collaborate to resolve the defect. Product owners can also be pulled in if needed. Testers should actively and proactively participate in TDD (Test Driven Development) and should collaborate with the programming team to share the scenarios and try to identify the defects at the unit level itself.
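To make the TDD point concrete, here is a minimal Python sketch using the standard `unittest` module. It assumes a hypothetical story whose acceptance criterion reads "orders of 100.00 or more get a 10% discount; smaller orders get none". The names `discounted_total_cents` and `DiscountRuleTests` are illustrative and do not come from any real project. The tester's contribution is the set of boundary scenarios: exactly at the threshold, one cent below it, and a negative amount. In TDD, these are written as failing unit tests before the programmer implements the rule.

```python
import unittest

# Hypothetical acceptance criterion shared by testers and programmers:
# "Orders of 100.00 or more get a 10% discount; smaller orders get none."
# Amounts are integer cents so that expected values compare exactly.
DISCOUNT_THRESHOLD_CENTS = 10_000


def discounted_total_cents(order_total_cents: int) -> int:
    """Apply the hypothetical discount rule to an order total given in cents."""
    if order_total_cents < 0:
        raise ValueError("order total cannot be negative")
    if order_total_cents >= DISCOUNT_THRESHOLD_CENTS:
        # 10% off, with the discount rounded down to a whole cent.
        return order_total_cents - order_total_cents // 10
    return order_total_cents


class DiscountRuleTests(unittest.TestCase):
    """Scenarios agreed with the tester; in TDD they exist (and fail) before the rule is coded."""

    def test_order_at_threshold_gets_discount(self):
        self.assertEqual(discounted_total_cents(10_000), 9_000)

    def test_order_just_below_threshold_gets_no_discount(self):
        self.assertEqual(discounted_total_cents(9_999), 9_999)

    def test_negative_total_is_rejected(self):
        with self.assertRaises(ValueError):
            discounted_total_cents(-1)
```

If the code is saved in a file (for example, a hypothetical `test_discount.py`), the tests can be run with `python -m unittest test_discount.py`. A boundary defect such as using `>` instead of `>=` would then surface at the unit level, before the feature ever reaches a formal test cycle.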

Conclusion

Customer centricity and a focus on communication have driven the success of Agile that is visible today.

It is a proven approach that is applied not just in software delivery but in other industries as well, and today it has become an industry in itself.

Our upcoming tutorial in this series will explain more about the Scrum Team along with their roles!!